In this installment, the value cluster is given tools that do the heavy lifting. Both LAM/MPI and SGE (Sun Grid Engine) will be installed and tested. Other nifty tools will be discussed as well.

In the last part of our story, we were about to name the key witness in the, no wait, wrong story. We talked about installing Warewulf on the master node and then booting the compute nodes. At the end of the article we were able to boot all of the nodes and run wwtop to see that the nodes were alive and breathing.

While the cluster was up and running, it didn't have all of the "fun" toys yet. In particular, we wanted to run the Top500 benchmark (HPL) and see where we placed on the list (for the serious readers, that is a joke). Before we do that, however, we need more software installed and, more importantly, we need to know whether everything is working correctly.

As you may recall, in part one we discussed the hardware and in part two we installed the Warewulf software. So if you have been looking at wwtop for a while, we are here to get more of those lights blinking. We are going to do some configuration and some package installation. In the end, you will have a good grasp of how your cluster is running and be able to run programs both from the command prompt and through a batch queuing system. Along the way, you will also get a better appreciation for how the Warewulf software actually works. Our cluster is named kronos, so if you are following along, references to kronos will mean "your cluster".

Our own VNFS

Because we are going to start changing things, let's first create our own VNFS (Virtual Node File System) and keep the default as a backup. As you may recall, the VNFS is the filesystem image that is used to make the RAM disk image used by Warewulf. We decided to name the VNFS kronos (creative, aren't we?). So first, we modified the file /etc/warewulf/nodes.conf to use our VNFS. We changed the line,

vnfs = default

to reflect our cluster name,

vnfs = kronos

Then we modified the file /etc/warewulf/vnfs.conf to reflect the changes we wanted. These changes tell the build scripts what we want in the VNFS and which modules we want with the kernel. The changes are shown in Sidebar One.

| Sidebar One: Kronos vnfs.conf |

|

The following is our new vnfs.conf file. We added the sis900 module and removed some modules we will not need. We then added an entry for a kronos VNFS and set it as the default. Notice the different ways you can configure the VNFS. We will explain more of these options in the text.

kernel modules = modules.dep sunrpc lockd nfs jbd ext3 mii crc32 e100 e1000 bonding sis900

kernel args = init=/linuxrc

path = /kronos # relative to 'vnfs dir' in master.conf

[generic]

excludes file = /etc/warewulf/vnfs/excludes

sym links file = /etc/warewulf/vnfs/symlinks

fstab template = /etc/warewulf/vnfs/fstab

[small]

excludes file = /etc/warewulf/vnfs/excludes-aggressive

sym links file = /etc/warewulf/vnfs/symlinks

fstab template = /etc/warewulf/vnfs/fstab

[hybrid]

ramdisk nfs hybrid = 1

excludes file = /etc/warewulf/vnfs/excludes-nfs

sym links file = /etc/warewulf/vnfs/symlinks-nfs

fstab template = /etc/warewulf/vnfs/fstab-nfs

[kronos]

default = 1 # Make this VNFS the default

excludes file = /etc/warewulf/vnfs/excludes-aggressive

sym links file = /etc/warewulf/vnfs/symlinks

fstab template = /etc/warewulf/vnfs/fstab

|

Now that we have defined our VNFS, let's create it! Run the following command to create the VNFS:

# wwvnfs.create

This command will populate the /vnfs/kronos directory with a new file system. You can look in the directory /vnfs/kronos to see what the command did. To build the actual image that will be used for the nodes from this VNFS, run the following command:

# wwvnfs.build

In the /tftpboot directory, you will see the file kronos.img.gz, which is the image file for the compute nodes.

Install PDSH

Before we use our new kronos VNFS image, we want to install a handy package. The default installation of Warewulf did not include pdsh, which stands for parallel distributed shell. The pdsh package allows us to issue commands to all (or some) of the nodes at one time. It only needs to be installed on the master node. As an aside, pdsh is probably most useful to root. While users may find pdsh useful for getting node information, there is usually no reason for users to run programs on the cluster using pdsh.

First, download the file pdsh-1.7-9.caos.src.rpm and rebuild the RPM using the command rpmbuild --rebuild pdsh-1.7-9.caos.src.rpm. Then install the binary RPM (look in /usr/src/redhat/RPMS/i386) on the master node using rpm -i pdsh-1.7-9.caos.i386.rpm.

The pdsh package needs to know the node names of your cluster. These names can be given on the command line or in a file. For convenience, let's create a file called kronos-nodes in /etc/warewulf that lists the nodes kronos00-kronos06 (one per line). This file is a list of all the nodes in your cluster (not counting the master node).
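Typing seven names by hand is easy enough, but a small helper keeps the list consistent if the cluster grows. The following is a minimal sketch; the function name make_node_list and its arguments are our own illustration and are not part of Warewulf or pdsh. It assumes node names are a prefix followed by a two-digit number, as on kronos.

```shell
#!/bin/sh
# Print node names <prefix>NN for NN from <first> to <last>, one per line.
# make_node_list is a hypothetical helper, not a Warewulf or pdsh command.
make_node_list() {
    prefix=$1
    i=$2
    last=$3
    while [ "$i" -le "$last" ]; do
        printf '%s%02d\n' "$prefix" "$i"
        i=$((i + 1))
    done
}

# Preview the list for kronos00-kronos06 on the terminal.
make_node_list kronos 0 6

# Once the preview looks right, write the real file as root:
#   make_node_list kronos 0 6 > /etc/warewulf/kronos-nodes
```

Pass the first and last node numbers without leading zeros (0 and 6, not 00 and 06), since the shell arithmetic would otherwise treat some values as octal.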

Then, as root, add the following to your .bashrc file:

export WCOLL=/etc/warewulf/kronos-nodes

The WCOLL variable is used by pdsh to find the list of machines in your cluster. To test pdsh, run the command pdsh hostname. The output should look something like the following.

kronos00: kronos00

kronos02: kronos02

kronos04: kronos04

kronos03: kronos03

kronos01: kronos01

kronos05: kronos05

kronos06: kronos06

Note that the order of output is not the order in which the nodes were specified in the kronos-nodes file. This effect is often due to the parallel nature of clusters. Many things are happening at once, and results may not be delivered in the order you expected.
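If the scrambled order makes the output hard to scan, the lines can be sorted by node name after the fact. The sketch below uses only the standard sort utility; the wrapper name sort_by_node is hypothetical, and the sample lines stand in for real pdsh output so the example runs without a cluster.

```shell
#!/bin/sh
# Sort "node: text" lines by the node name before the first colon.
# Best suited to one line of output per node: lines from the same node
# are ordered by their text, not by the order in which they arrived.
sort_by_node() {
    sort -t: -k1,1
}

# Sample lines standing in for the output of "pdsh hostname".
printf '%s\n' 'kronos02: kronos02' 'kronos00: kronos00' 'kronos01: kronos01' |
    sort_by_node

# On the cluster this would be used as:
#   pdsh hostname | sort_by_node
```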

Changing Module Options on Nodes

One of the important things we will want to be able to do is change some parameters on the nodes. Among the most important are module options. We chose to create our own VNFS so we could tune the system. In particular, the Gigabit Ethernet driver has several module options that may come in handy. To begin, make a backup of the modprobe.conf.dist file in the /vnfs/kronos/etc directory. We can then make changes to the nodes by editing the original file.

As an example, we wanted to experiment with the driver settings on the GigE cards. One setting in particular was the Interrupt Throttle Rate. We found that leaving this at the default value (8000) gave us rather high latency (~65 µs). However, turning off interrupt throttling cut the latency by more than half (~29 µs). Of course, this means the CPU is doing more work servicing interrupts, but in some cases the lower latency is important.

To change the options for the e1000 cards (GigE), you need to modify the /vnfs/kronos/etc/modprobe.conf.dist file by adding the following line to the end of the file.

options e1000 InterruptThrottleRate=0 TxIntDelay=0

And make sure you add it to /etc/modprobe.conf on the host as well!
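Because the same line goes into two files, and because you may repeat this step while experimenting, it is convenient to add the line only when it is not already there. The following is a minimal sketch of that idea; append_once is a hypothetical helper name, and the demo runs against a scratch file rather than the real configuration files.

```shell
#!/bin/sh
# Append a line to a file only if that exact line is not already present.
# append_once is a hypothetical helper, not part of Warewulf.
append_once() {
    file=$1
    line=$2
    # -x matches whole lines, -F treats the text literally (no regex).
    if ! grep -qxF -e "$line" "$file" 2>/dev/null; then
        printf '%s\n' "$line" >> "$file"
    fi
}

# Demo on a scratch file: calling it twice leaves a single copy of the line.
demo=$(mktemp)
append_once "$demo" 'options e1000 InterruptThrottleRate=0 TxIntDelay=0'
append_once "$demo" 'options e1000 InterruptThrottleRate=0 TxIntDelay=0'
cat "$demo"
rm -f "$demo"

# On the master node, as root, the two real targets from the text would be:
#   append_once /vnfs/kronos/etc/modprobe.conf.dist 'options e1000 InterruptThrottleRate=0 TxIntDelay=0'
#   append_once /etc/modprobe.conf 'options e1000 InterruptThrottleRate=0 TxIntDelay=0'
```

Note that this only guards against exact duplicates. If you later change a value, edit the existing line by hand rather than appending a second options line for the same module.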

For these changes to take effect on the cluster, you have to rebuild the VNFS and reboot the nodes. Run the following commands on the master node to rebuild the VNFS.

# wwvnfs.build

# wwmaster.dhcpd

We are now ready to reboot the nodes. Rather than pushing reset buttons or typing a bunch of commands, let's use pdsh. Enter the following:

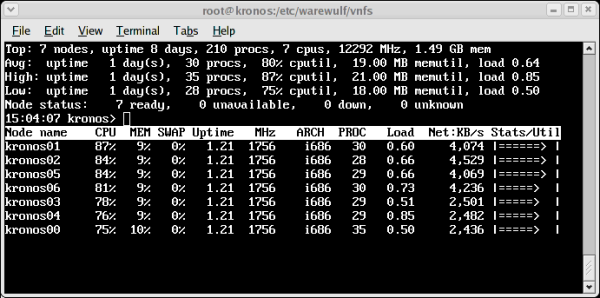

# pdsh /sbin/rebootUse the Warewulf command, wwtop (See Figure One) to check that the nodes have come back up. When nodes are off or unreachable you will see a "DOWN" listed the "Stats/Util" column. As nodes reboot the "DOWN" should change to "NOACCESS". When all the nodes are showing "NOACCESS" the cluster is ready for user accounts. Run the command, wwnode.sync to push the accounts to all of the nodes. Note, on kronos we have found that some nodes seem to take a long time to reboot as they get stuck in the shutdown process. We're looking into this problem. If this happens you can press the reset button on the node as there is no penalty for not shutting down the filesystem.

It is also most effective if you make the same module changes on the master node. Reloading the e1000 module on the master node can be accomplished by issuing the following commands (change eth1 to reflect your cluster interconnect).

# ifdown eth1

# rmmod e1000

# modprobe e1000

# ifup eth1

Also, we do not want to twiddle with the on-board Fast Ethernet service network, as it is used to boot and manage the nodes.

To check the Ethernet module options on the master node, run dmesg | grep e1000. The command shows all of the details about the GigE NIC (Intel e1000 NIC) that appear in the kernel message log. The new setting should be noted in this output. To check the Ethernet module settings on the compute nodes, run pdsh dmesg | grep e1000.
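With several nodes, it is easy to miss one that printed nothing at all, for example because it is still rebooting or did not load the driver. The sketch below compares the node list against the node names that appear in the pdsh output and prints the ones that are absent. The helper name missing_nodes is hypothetical, and the sample lines are placeholders rather than real driver messages.

```shell
#!/bin/sh
# Read "node: text" lines on standard input and print every node from the
# list file ($1) that produced no output. missing_nodes is a hypothetical
# helper, not part of pdsh or Warewulf.
missing_nodes() {
    nodes_file=$1
    seen=$(mktemp) || return 1
    # Keep only the node name before the first colon, one entry per node.
    cut -d: -f1 | sort -u > "$seen"
    # comm -23 prints lines found only in the first (sorted) input.
    sort -u "$nodes_file" | comm -23 - "$seen"
    rm -f "$seen"
}

# Demo with a three-node list and placeholder output from two of the nodes.
list=$(mktemp)
printf 'kronos00\nkronos01\nkronos02\n' > "$list"
printf 'kronos00: e1000 sample line\nkronos02: e1000 sample line\n' |
    missing_nodes "$list"
rm -f "$list"

# On the cluster this would be used as:
#   pdsh dmesg | grep e1000 | missing_nodes /etc/warewulf/kronos-nodes
```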

At some point you may want to change the MTU (the maximum size of an Ethernet packet). To accomplish this step, first change the MTU line in the files /vnfs/kronos/etc/sysconfig/network-scripts/ifcfg-eth1 and /etc/sysconfig/network-scripts/ifcfg-eth1 to the value that you want. The first file is for the VNFS and the second is for the master node. Note that the kronos VNFS ifcfg-eth1 file may not exist. If so, just create the file and add the MTU setting, such as:

MTU=6000

Note that MTU sizes can normally range from 1500 (the default) to 9000 bytes. The easiest way for this to take effect is to rebuild the VNFS and reboot the nodes as we did above. Then reset eth1 on the master node.

# ifdown eth1

# ifup eth1

After this, you can reboot the compute nodes using pdsh (see above) and watch the progress using wwtop. Don't forget to enter wwnode.sync when the nodes are ready (showing "NOACCESS"). The nodes should be back up and ready for production. You can also check the MTU setting on the master node by issuing ifconfig eth1 | grep MTU and on the compute nodes by issuing pdsh /sbin/ifconfig eth1 | grep MTU.
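Since the MTU line may or may not already exist in each ifcfg-eth1 file, a small helper that handles both cases can save some editing. The following is a minimal sketch; set_mtu is a hypothetical helper name, and the DEVICE=eth1 line in the demo is sample content, not a statement about what your file contains.

```shell
#!/bin/sh
# Set MTU=<value> in an ifcfg-style file, replacing any existing MTU line
# and creating the file if it does not exist.
# set_mtu is a hypothetical helper, not part of Warewulf.
set_mtu() {
    file=$1
    mtu=$2
    tmp=$(mktemp) || return 1
    if [ -f "$file" ]; then
        # Copy everything except an old MTU setting. grep exits non-zero
        # when nothing is left to copy, which is fine here.
        grep -v '^MTU=' "$file" > "$tmp" || true
    fi
    printf 'MTU=%s\n' "$mtu" >> "$tmp"
    # Overwrite in place so the target keeps its ownership and permissions.
    cat "$tmp" > "$file"
    rm -f "$tmp"
}

# Demo on a scratch file with sample content.
demo=$(mktemp)
printf 'DEVICE=eth1\nMTU=1500\n' > "$demo"
set_mtu "$demo" 6000
cat "$demo"
rm -f "$demo"

# On the master node, as root, the two real targets from the text would be:
#   set_mtu /vnfs/kronos/etc/sysconfig/network-scripts/ifcfg-eth1 6000
#   set_mtu /etc/sysconfig/network-scripts/ifcfg-eth1 6000
```

The VNFS still has to be rebuilt and the nodes rebooted afterward, exactly as described above; the helper only edits the files.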

These two exercises should give you a feel for making changes to both the master node and the compute nodes (through changing the VNFS).