pNFS

Currently, a number of vendors are working on version 4.1 of the NFS standard. One of the biggest additions in NFSv4.1 is pNFS, or Parallel NFS. When people first hear about pNFS they sometimes think it is an attempt to kludge parallel file system capabilities into NFS, but this isn't the case. It is the next step in the evolution of the NFS protocol: a well planned, tested, and executed approach to adding a true parallel file system capability to NFS. The goal is to improve performance and scalability while making the parallel file system a standard (recall that NFS is the only true shared file system standard). Moreover, the standard is designed to be used with file based, block based, and object based storage devices, with an eye towards freeing customers from vendor or technology lock-in.

The NFSv4.1 draft standard contains a draft specification for pNFS that is being developed and demonstrated now. A number of vendors, including several large ones, are working together to develop pNFS. The backing of large vendors means there is a real chance that pNFS will see wide acceptance in a reasonable amount of time.

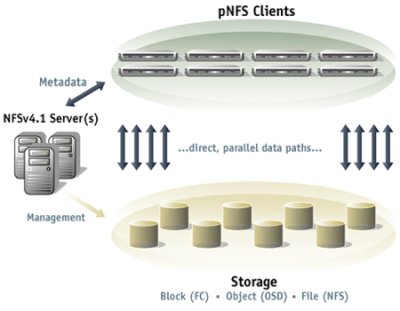

The basic architecture of pNFS looks like the following (from the www.pnfs.com website):

The architecture consists of pNFS clients, NFSv4.1 metadata server(s), and one or more storage devices. These storage devices can be block based, as in the case of Fibre Channel storage; object based, as in the case of Panasas or Lustre; or file based, as in the case of NFS storage devices such as those from NetApp. One network connects the NFSv4.1 metadata server(s) with the clients and the storage devices, and another connects the clients directly with the storage devices.

The pNFS clients mount the file system in much the same way as an NFSv3 or NFSv4 file system. When a client accesses a file on the pNFS file system, it makes a request to one of the NFSv4.1 metadata servers, which passes back what is called a layout. A layout is an abstraction that describes details about the file, such as permissions, as well as where the file is located on the storage devices and what capabilities the client has in accessing the storage devices where the file is located (read) or is to be located (write). Once the client has the layout, it accesses the data directly on the storage device(s), removing the metadata server from the actual data access path. When the client is done, it sends the layout back to the metadata server in case any changes were made to the file. The metadata server also acts as the traffic cop when more than one client wants to access a file. It controls permissions on the file and grants clients the capabilities to read or write it. This enforces coherency on the file while allowing more than one client to read or write to the file at the same time.
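The request, access, and return cycle described above can be sketched as a toy model in Python. This is only an illustration under stated assumptions, not the NFSv4.1 wire protocol: the class names, the `layout_get` and `layout_return` methods, and the in-memory `storage` dictionary are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Layout:
    """Toy layout: where a file lives and what the holder may do with it."""
    path: str
    devices: tuple   # names of the storage devices holding the file
    mode: str        # "r" or "rw": the capability granted to the client


@dataclass
class MetadataServer:
    """Hypothetical metadata server: hands out layouts, never moves file data."""
    locations: dict                                   # path -> tuple of device names
    outstanding: dict = field(default_factory=dict)   # path -> [(client, Layout)]

    def layout_get(self, client: str, path: str, mode: str) -> Layout:
        layout = Layout(path, self.locations[path], mode)
        self.outstanding.setdefault(path, []).append((client, layout))
        return layout

    def layout_return(self, client: str, layout: Layout) -> None:
        self.outstanding[layout.path].remove((client, layout))


def read_file(layout: Layout, storage: dict) -> bytes:
    """Client-side read: goes straight to the devices named in the layout."""
    return b"".join(storage[dev][layout.path] for dev in layout.devices)


# Two storage devices, each holding one piece of the same file (made-up data).
storage = {
    "dev0": {"/data/a": b"hello "},
    "dev1": {"/data/a": b"world"},
}
mds = MetadataServer(locations={"/data/a": ("dev0", "dev1")})

layout = mds.layout_get("client1", "/data/a", "r")   # 1. ask the metadata server
data = read_file(layout, storage)                    # 2. read directly from storage
mds.layout_return("client1", layout)                 # 3. hand the layout back
print(data)
```

Note that `read_file` never touches the `MetadataServer` object: once the layout is in hand, the metadata server is out of the data path, which is the point of the design.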

If you have more than one storage device, the clients can access all of them in parallel, but each client uses only the devices where its data is stored or is to be stored. This is the parallel portion of pNFS. If you want more speed, you add more storage devices (more spindles and more network connections) and make sure the data is spread across them. Moving the metadata server out of the direct line of fire during file operations also improves speed, because the metadata server is no longer a bottleneck once the client has been granted permission to access the file and knows where the data is located.
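To make "only the devices where the data is stored" concrete, here is a minimal sketch that assumes the file is striped round-robin across the devices in fixed-size stripe units. The striping scheme, the function name, and the 64 KiB stripe unit are assumptions for illustration; actual placement is whatever the layout says it is.

```python
def devices_for_range(offset: int, length: int,
                      stripe_unit: int, num_devices: int) -> list:
    """Return the sorted indices of the devices holding bytes
    [offset, offset + length) under simple round-robin striping."""
    if length <= 0:
        return []
    first_stripe = offset // stripe_unit
    last_stripe = (offset + length - 1) // stripe_unit
    # A range spanning num_devices or more stripes touches every device,
    # so there is no need to walk further than that.
    last_needed = min(last_stripe, first_stripe + num_devices - 1)
    return sorted({s % num_devices for s in range(first_stripe, last_needed + 1)})


STRIPE = 64 * 1024   # hypothetical 64 KiB stripe unit
small = devices_for_range(0, STRIPE, STRIPE, 4)           # one stripe unit
middle = devices_for_range(STRIPE, 2 * STRIPE, STRIPE, 4) # two stripe units
large = devices_for_range(0, 1024 * 1024, STRIPE, 4)      # 1 MiB read
print(small, middle, large)
```

A small request touches a single device, while a large one fans out across all of them. That fan-out is where the aggregate bandwidth comes from as devices are added.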

The client needs a layout "driver" so that it can communicate with any one of the three types of storage devices, or possibly a combination of them, at any one time. How the data is actually transmitted between the storage devices and the clients is defined elsewhere. The "control" protocol shown in Figure Four between the metadata server and the storage devices is also defined elsewhere. Defining the control protocol and the data transfer protocols elsewhere gives the vendors great flexibility. It allows them to add value to pNFS by improving performance, manageability, fault tolerance, or any other feature they wish to address, as long as they follow the NFSv4.1 standard.
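One way to picture the layout driver idea is as a small plug-in interface on the client, with one implementation per storage type. The sketch below is hypothetical throughout: the class names, the `read` signature, the `"start:count"` block address format, and the dictionary-based "devices" are invented for illustration and are not taken from any pNFS implementation.

```python
from abc import ABC, abstractmethod


class LayoutDriver(ABC):
    """Hypothetical client-side driver for one kind of storage device."""
    layout_type: str

    @abstractmethod
    def read(self, device: dict, location: str) -> bytes:
        """Fetch the data that the layout says lives at `location`."""


class FileDriver(LayoutDriver):
    layout_type = "file"

    def read(self, device, location):
        return device["files"][location]        # location is a file name


class BlockDriver(LayoutDriver):
    layout_type = "block"

    def read(self, device, location):
        # location is "start:count", a made-up way to name a run of blocks
        start, count = (int(x) for x in location.split(":"))
        return b"".join(device["blocks"][start:start + count])


class ObjectDriver(LayoutDriver):
    layout_type = "object"

    def read(self, device, location):
        return device["objects"][location]      # location is an object id


# The client keeps one driver instance per layout type it understands.
DRIVERS = {cls.layout_type: cls() for cls in (FileDriver, BlockDriver, ObjectDriver)}


def read_via_layout(layout_type: str, device: dict, location: str) -> bytes:
    """Dispatch to whichever driver matches the layout the client was given."""
    try:
        driver = DRIVERS[layout_type]
    except KeyError:
        raise LookupError(f"no layout driver installed for {layout_type!r}") from None
    return driver.read(device, location)


block_dev = {"blocks": [b"ab", b"cd", b"ef"]}
object_dev = {"objects": {"obj-7": b"payload"}}
print(read_via_layout("block", block_dev, "1:2"))
print(read_via_layout("object", object_dev, "obj-7"))
```

The rest of the client only ever calls `read_via_layout`, so supporting a new kind of storage means adding one driver class rather than changing the client.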

If you haven't already noticed, one of the really attractive features of pNFS is that it avoids vendor lock-in and technology lock-in. This is in part because pNFS will be a standard (if it is approved) as part of NFSv4.1. In fact, it will be the only parallel file system standard. Vendors who follow the standard should be able to interoperate, which is what all customers want. In theory, a system could have a pool of object based, file based, and block based storage, with all the pNFS clients accessing that pool. This allows you, the customer, to choose whatever storage you want from whichever vendor you want, as long as there are layout drivers for it.

So why should vendors support NFSv4.1? The answer is fairly simple. With NFSv4.1 they can support multiple operating systems without having to port their entire software stack. They only have to write a driver for their hardware. While writing a driver isn't trivial, it is much easier than porting an entire software stack to a new OS.

Parallel NFS is on its way to becoming a standard. It is currently in the prototyping stage, and interoperability testing is being performed by the various participants. It is hoped that sometime in late 2007 it will be adopted as the new NFS standard and will be available in a number of operating systems. Also, Panasas has announced that it will be releasing key components of its DirectFlow client software to accelerate the adoption of pNFS.

If you would like more information please go to the Panasas website to see a recorded webinar on pNFS. If you want to experiment with pNFS now, the Center for Information Technology Integration (CITI) has some Linux 2.6 kernel patches that use PVFS2 for storage. Finally, Panasas has created a website to provide documentation, links, and hopefully soon, some code for pNFS.

That is all for part one. Coming Next: Part Two: NAS, AoE, iSCSI, and more!

I want to thank Marc Unangst, Brent Welch, and Garth Gibson at Panasas for their help in understanding the complex world of cluster file systems. While I haven't even come close to achieving the understanding that they have, I understand much more than I did when I started. This article, an attempt to summarize the world of cluster file systems, is the result of many discussions in which they answered many, many questions from me. I want to thank them for their help and their patience.

I also hope this series of articles, despite their length, has given you some good general information about file systems and even storage hardware. And to borrow some parting comments, "Be well, Do Good Work, and Stay in Touch."

A much shorter version of this article was originally published in ClusterWorld Magazine. It has been greatly updated and formatted for the web. If you want to read more about HPC clusters and Linux you may wish to visit Linux Magazine.

Dr. Jeff Layton hopes to someday have a 20 TB file system in his home computer. He lives in the Atlanta area and can sometimes be found lounging at the nearby Fry's, dreaming of hardware and drinking coffee (but never during working hours).

© Copyright 2008, Jeffrey B. Layton. All rights reserved.

This article is copyrighted by Jeffrey B. Layton. Permission to use any part of the article or the entire article must be obtained in writing from Jeffrey B. Layton.