Second Prototype

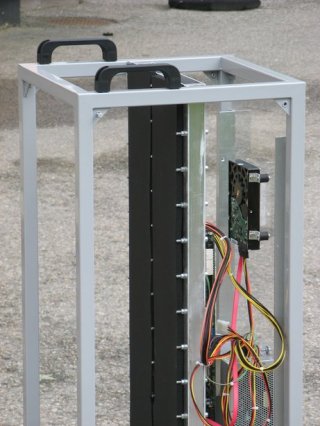

The first prototype was built from ordinary desktop components. Although inexpensive, these components have some drawbacks, the lack of support for ECC memory perhaps being the biggest. After completing the first prototype we therefore decided to build a more "professional" system. As mentioned in the introduction, we chose a system based on the Quad-Core AMD Opteron Processor 2376 HE and a Tyan Thunder n3600W (S2935) mainboard (again, AMD Sweden is gratefully acknowledged for providing us with the CPUs).

This time we wanted completely fanless operation, and we designed a system with two cooling channels. To save costs, however, we have so far built only "half" of the system: one channel and one mainboard. Because we insulate the free side of the channel, we can test the cooling capability of the system without having to buy all the hardware; the temperatures will be the same once the second channel, with its attached mainboard, is added.

In this design we did experience high temperatures at another location on the mainboard (i.e., not at the CPU). One way to address this would be to put that part of the mainboard in thermal contact with the cooling channel as well. That could have been complicated, so instead we replaced the small heat sink shipped with the mainboard with a bigger one, which solved the problem.

We could not use the small PicoPSU in this system because of the power requirements of the processors. Although they have no fans, the PSUs used in this system are quite bulky, and hence the system is unnecessarily large (there is some "air" in it). Some pictures of the new design can be seen below in Figures Eight and Nine.

Conclusions and future work

Most people have come to expect a high noise level as a "feature" of HPC. With the two systems we have built thus far we have shown that this is not necessarily true. We think that systems like these, maybe extended with a few more mainboards, could be useful when powerful computational resources are needed but placing them in a dedicated computing center is not a viable alternative (due to, e.g., economic, confidentiality, or "status" issues).

We started out by building small HTPCs, and the cooling technique we implemented is perfectly adapted to this area as well, i.e., systems using this technique can be small, silent and efficient at the same time.

Furthermore, we see no reason why this technique could not be applied to larger systems. Of course, in that case some care has to be taken in the design of the cooling channel. The taller the channel, the larger the insulating effect of the boundary layer will be. For example, in our two PHPC prototypes we have seen slightly higher temperatures on the two top CPUs. However, the influence of the boundary layer can be reduced by extending the channel with an internal heat-conducting structure, and we intend to do detailed numerical simulations to evaluate possible designs. For larger systems, the reduced noise, although certainly a good thing, is not the main issue. Instead, the main benefit is the reduced cost of air conditioning that a cooling channel makes possible. These savings are possible because the heat generated by the CPUs is separated from the air in the room to a much greater extent than in a traditional cluster today.
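To give a rough feel for why the top CPUs run warmer, the boundary-layer effect can be sketched with the classical textbook result for laminar natural convection on an isothermal vertical plate, where the local heat transfer coefficient falls off as height to the power of -1/4. This is only an illustration under stated assumptions, not a model of our channel: a free plate is a simplification of an enclosed channel, and the air properties, temperature difference, and heights below are illustrative values that were not measured on the prototypes.

```python
def local_h(x, delta_t, t_film=310.0, k=0.027, nu=1.7e-5, pr=0.71, g=9.81):
    """Local heat transfer coefficient [W/(m^2 K)] at height x [m].

    Uses the classical integral solution for laminar natural convection
    on an isothermal vertical plate:
        Nu_x = 0.508 * Pr^(1/2) * (0.952 + Pr)^(-1/4) * Gr_x^(1/4)
    All default property values are assumed, rounded values for air near
    room temperature; they are not taken from the prototypes.
    """
    beta = 1.0 / t_film                           # ideal-gas expansion coefficient [1/K]
    gr_x = g * beta * delta_t * x**3 / nu**2      # local Grashof number
    nu_x = 0.508 * pr**0.5 * (0.952 + pr) ** -0.25 * gr_x**0.25
    return nu_x * k / x


if __name__ == "__main__":
    # Hypothetical wall-to-air temperature difference of 30 K.
    for height in (0.1, 0.2, 0.4, 0.8):
        print(f"x = {height:.1f} m  ->  h = {local_h(height, 30.0):.2f} W/(m^2 K)")
```

In this simplified picture, a surface four times higher up the wall sees a local coefficient smaller by a factor of the square root of two, which is consistent in direction with the slightly higher temperatures on the top CPUs. An internal heat-conducting structure would add surface area and disturb the boundary layer, which is why detailed simulation is needed to evaluate it.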

Jon Tegner can be contacted at tegner (you know what to put here) renget.se