How to Benchmark TCP/IP Ethernet Performance

There are two ways to look at designing HPC clusters. On the positive side, there is a plethora of hardware and software options. On the negative side, there is a plethora of hardware and software options! The cluster designer has a special burden because unlike putting together one or two servers for the office, a cluster multiplies your decisions by N, where N is the number of nodes. A wrong decision can have two negative consequences. First, a fix for the problem will probably require more money and time. And, second, before the problem is fixed you may only be getting a fraction of the performance possible from your cluster. Clusters have a way of amplifying bad decisions.

All is not lost, however. Choosing the right stuff is not that difficult. It takes some investigation, some testing, and asking the right questions. As an example, let's look at a scenario where you would like to retrofit an older cluster with Gigabit Ethernet (GigE) or even build a new cluster using GigE. We are not going to do an exhaustive review of all GigE adapters, but rather demonstrate how one might benchmark a specific adapter. GigE adapters may also be integrated on motherboards, in which case it is a good idea to test these as well. The common assumption that an on-board GigE adapter will run better than a separate adapter is not necessarily true. Ultimately your application(s) should determine the hardware, but before getting too specific, we can develop a set of general protocols to help narrow down the hardware and software choices.

How Low Can We Go?

After some investigation, we see that GigE adapters seem to come in two flavors, a 32 bit version for workstations and a 64 bit version for servers. There also seems to be a large price difference. A quick check on the web shows us that workstation adapters can be purchased for about $35 (US) and server adapters for about $115 (US). The price difference can be substantial for a large number of nodes. Of course, we know that the more expensive 64 bit adapter is much better than the silly little 32 bit workstation part. Are you sure?

Before you bet your career on an assumption, why not do some testing? Indeed, let's probe a 32 bit adapter to see just how it measures up. Turning to the Internet again, we find that the Netgear GA302T seems to be a good choice. It is based on a Broadcom chipset, works at both 33 and 66 MHz, and can be purchased for about $32 (including shipping!). Note: Since writing this article, the GA302T has gone out of production, which is all the more reason to use this article as a guide for testing Ethernet adapters!
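
To put the price gap in perspective, here is a minimal sketch of the arithmetic. The two prices are the ones quoted above; the node counts and the function name `adapter_cost_gap` are hypothetical and chosen only for illustration.

```python
# Approximate adapter prices quoted in the article (US dollars).
WORKSTATION_PRICE = 35
SERVER_PRICE = 115


def adapter_cost_gap(nodes: int) -> int:
    """Extra cost of buying a server adapter instead of a workstation adapter for every node."""
    return nodes * (SERVER_PRICE - WORKSTATION_PRICE)


# Hypothetical cluster sizes, not taken from the article.
for nodes in (16, 64, 256):
    print(f"{nodes:4d} nodes: ${adapter_cost_gap(nodes):,} extra for server adapters")
```

At $80 per node, the difference grows linearly with the cluster size, which is why it is worth measuring whether the cheaper part is good enough.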

The Test Set-up

To perform the tests, we will connect the two Netgear GigE adapters directly using a CAT 5e crossover cable. We will use two servers that contain Supermicro P4DC6 motherboards with dual 1.6 GHz Xeons and 1 GB RAM. The motherboards have both 32 bit/33 MHz and 64 bit/66 MHz PCI slots. It should be noted that the Netgear 32 bit adapter is designed to work in both the 33 and 66 MHz slots. The test servers are also connected via the on-board Fast Ethernet connection so the GigE interface can be changed without losing communication between the nodes. The test software includes Linux kernel 2.4.21, a program called Netpipe, and Gnuplot for plotting data (see Resources Sidebar).

Our testing checklist includes the following:

- Download and compile the most recent kernel (which usually includes the most recent adapter drivers).

- Bring up the GigE adapters (see Sidebar One).

- Download and compile Netpipe on both machines.

- Download and compile Gnuplot if needed. Gnuplot is included in most Linux distributions.

When all of the above is complete, the testing can begin. Once the two adapters are up and running, using Netpipe is quite easy (see the Running Netpipe Sidebar). It should be mentioned that Netpipe can test TCP, MPI, and PVM performance. Newer versions can also test many popular high performance interfaces. For the purposes of this column, we will measure the TCP performance. It is best to perform the tests as root, as you will be taking the interface up and down quite a bit.

As part of our testing we would like to answer the following questions:

- What is the single byte latency?

- How do the 33 and 66 MHz results compare?

- Can increasing the MTU help performance?

To answer these questions we will place the adapters in both the 32 bit/33 MHz and 64 bit/66 MHz slots. We will also vary the MTU size.
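
Before looking at measurements, it can help to estimate what each slot could deliver in theory. The sketch below assumes conventional PCI moves at most one bus-width word per clock cycle and uses the nominal 33 and 66 MHz clock figures; the function name `pci_peak_mbits` is hypothetical. Real throughput will be lower, since the bus is shared with other devices and carries protocol overhead.

```python
GIGE_LINE_RATE_MBITS = 1000.0  # nominal Gigabit Ethernet line rate


def pci_peak_mbits(bus_width_bits: int, clock_mhz: float) -> float:
    """Theoretical peak PCI bandwidth in Mbit/s, assuming one transfer per clock."""
    return bus_width_bits * clock_mhz


# A 32 bit adapter uses only 32 data lines, even when seated in a 64 bit slot.
configurations = {
    "32 bit adapter in 33 MHz slot": (32, 33.0),
    "32 bit adapter in 66 MHz slot": (32, 66.0),
    "64 bit adapter in 66 MHz slot": (64, 66.0),
}

for name, (width, clock) in configurations.items():
    peak = pci_peak_mbits(width, clock)
    ratio = peak / GIGE_LINE_RATE_MBITS
    print(f"{name}: {peak:.0f} Mbit/s peak ({ratio:.2f}x GigE line rate)")
```

Under these assumptions the 33 MHz slot has almost no headroom over the GigE line rate, so it is a plausible bottleneck, while the 66 MHz slot has roughly twice the bandwidth the adapter needs.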

The Results

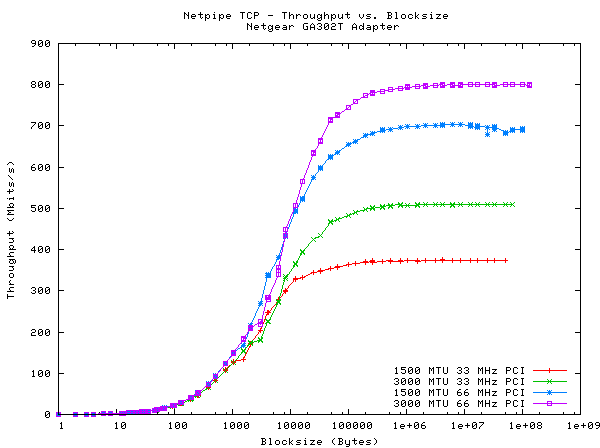

A summary of results is shown in Figure One (see Sidebar Three for instructions on how to plot the data using Gnuplot). Both the 33 MHz and 66 MHz PCI slots were used with a standard 1500 byte MTU and then a 3000 byte MTU. The latency is a respectable 35 microseconds for all tests. However, the throughput for large block payloads can vary by a factor of two or more depending on the PCI slot and MTU size. It is clear that the best throughput (800 Mbits/sec) was recorded for the 66 MHz slot and the 3000 byte MTU. However, a closer look at the graph shows varying results for the 1000 to 10,000 byte payloads. Indeed, the 1500 byte MTU seems to provide better performance in this region. If your application transfers a large number of messages in this size range, you may see better results with a standard 1500 byte MTU. MTU sizes greater than 3000 are not reported because with large MTUs the interface would stop working after a certain block size.
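
As a rough cross-check on the MTU question, the following sketch estimates the best-case TCP payload rate on a GigE link from framing overhead alone. It assumes IPv4 and TCP headers without options (20 bytes each) and standard Ethernet per-frame overhead (preamble, header, frame check sequence, and inter-frame gap); the function name `tcp_goodput_mbits` is hypothetical, and the model ignores host, driver, and PCI limits entirely.

```python
# Per-frame Ethernet overhead in bytes:
# preamble + start delimiter (8), header (14), frame check sequence (4), inter-frame gap (12).
ETHERNET_OVERHEAD = 8 + 14 + 4 + 12

# IPv4 header (20) plus TCP header (20), assuming no options.
IP_TCP_HEADERS = 20 + 20


def tcp_goodput_mbits(mtu: int, line_rate_mbits: float = 1000.0) -> float:
    """Upper bound on TCP payload throughput for full-sized frames at a given MTU."""
    payload = mtu - IP_TCP_HEADERS
    bytes_on_wire = mtu + ETHERNET_OVERHEAD
    return line_rate_mbits * payload / bytes_on_wire


for mtu in (1500, 3000, 9000):
    print(f"MTU {mtu}: at most {tcp_goodput_mbits(mtu):.1f} Mbit/s of TCP payload")
```

In this model, going from a 1500 to a 3000 byte MTU raises the ceiling by only a few percent, so a larger measured gain presumably comes from handling fewer packets per byte rather than from framing efficiency.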

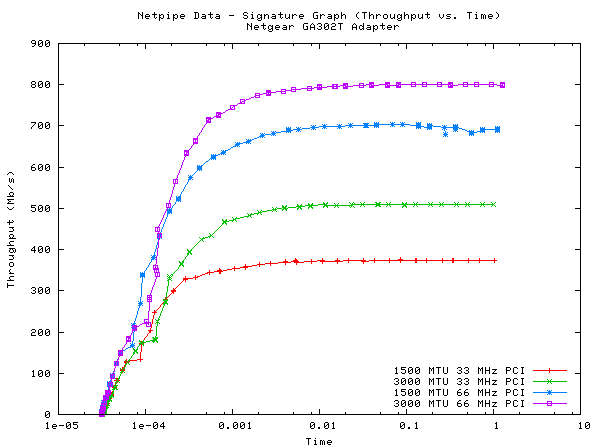

The Netpipe authors also suggest plotting a "Network Signature Graph" (throughput vs time). This result is shown in Figure Two.
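
The small-message latency and the points for a signature graph can also be pulled out of the Netpipe output with a short script. The sketch below is only illustrative: it assumes an output file with three whitespace-separated columns per line (block size in bytes, throughput in Mbits/sec, time in seconds), which may not match your Netpipe version, so check the file before relying on it. The rows in `SYNTHETIC_ROWS` are made up to exercise the parser and are not measurements.

```python
from typing import List, NamedTuple, Tuple


class Sample(NamedTuple):
    block_bytes: int
    mbits_per_s: float
    seconds: float


def parse_netpipe(text: str) -> List[Sample]:
    """Parse rows of 'bytes throughput time' (an assumed column order)."""
    samples = []
    for line in text.splitlines():
        fields = line.split()
        if len(fields) < 3 or fields[0].startswith("#"):
            continue  # skip blank lines, short lines, and comments
        samples.append(Sample(int(fields[0]), float(fields[1]), float(fields[2])))
    return samples


def small_message_latency_us(samples: List[Sample]) -> float:
    """Transfer time of the smallest block, in microseconds."""
    smallest = min(samples, key=lambda s: s.block_bytes)
    return smallest.seconds * 1e6


def peak_throughput(samples: List[Sample]) -> Sample:
    """The sample with the highest throughput."""
    return max(samples, key=lambda s: s.mbits_per_s)


def signature_points(samples: List[Sample]) -> List[Tuple[float, float]]:
    """(time, throughput) pairs sorted by time, ready for a signature plot."""
    return sorted((s.seconds, s.mbits_per_s) for s in samples)


# Synthetic rows for illustration only; these are NOT measured results.
SYNTHETIC_ROWS = """
1        0.229   0.000035
1024     181.08  0.00004524
1048576  797.34  0.01052076
"""

if __name__ == "__main__":
    data = parse_netpipe(SYNTHETIC_ROWS)
    best = peak_throughput(data)
    print(f"small-message latency: {small_message_latency_us(data):.1f} microseconds")
    print(f"peak throughput: {best.mbits_per_s:.2f} Mbits/sec at {best.block_bytes} bytes")
```

To use it on real data, read the Netpipe output file into a string and pass it to `parse_netpipe` in place of the synthetic rows.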

Conclusions

With regard to the low cost Netgear GA302T, we can say that it was a very capable adapter while it lasted. Currently the Intel Pro/1000 MT is a good choice for a low cost adapter. Looking at the results, we found that tuning the MTU size to the application may improve performance. We also found a limit to increasing the MTU size even though the driver and chipset are supposed to support MTU sizes up to 9000 bytes.

Netpipe is a great tool for testing the network performance of your cluster designs. Of course, there are many other factors we did not consider, such as motherboards, chipset implementation, adding an MPI or PVM layer, and introducing a switch. Fortunately the effect of all these variables can be easily measured with Netpipe. Finally, you will notice that this type of information is not normally part of the product literature. Without proper testing, a design decision based on product data sheets and glossies is at best a guess and at worst a costly mistake. In future articles, we will examine other issues that influence cluster price and performance.