I feel the need for PVFS speed

In a previous column I looked at the various ways to code for PVFS1 and PVFS2. However, I never really discussed how to architect a PVFS system for your application. A well-chosen PVFS design can improve the I/O performance of your application, ease the administrative burden, increase flexibility, and improve fault tolerance. In this column I'll examine how to enhance and tune performance.

When we discuss performance enhancements for PVFS, there are three issues to think about: the network to the I/O servers, the number of I/O servers, and the local disks in the I/O servers. For each of these issues there are a number of decisions to be made. Hopefully this column will start you on the road to making these decisions.

Networking to the I/O Servers

Recall that the storage nodes within PVFS are called I/O servers and the cluster nodes that use the storage space on the I/O servers are called clients. A critical factor in PVFS performance is how data moves between the I/O servers and the clients. A very nice feature of PVFS is that you can use the existing cluster network for PVFS traffic as well as computational traffic. This dual use can save you money because no additional network is needed for PVFS. It also works well because applications typically don't perform computation at the same time they perform I/O. Therefore, in most cases, you won't be taking network bandwidth away from computation to perform parallel I/O.

Given the cost of GigE (Gigabit Ethernet) today, it is quite feasible to build an inexpensive cluster network with good bandwidth and latency for both computational and PVFS traffic. Many motherboards already come with built-in GigE, and GigE NICs (Network Interface Cards) with excellent performance are available at very low cost.

If you need better I/O performance from PVFS, or more bandwidth or lower latency from your network, there are two things you can do: upgrade the existing single network, or add a second network dedicated to PVFS.

Upgrading from something like GigE to Myrinet, Infiniband, Quadrics, or SCI will give you more bandwidth and lower latency for your computational traffic. In addition, since you can run TCP traffic over these networks, you will still be able to use PVFS. Switching to a high-performance network will obviously cost more; however, if your computational loads require such a network, application I/O (PVFS) performance will improve as well.

If you have a large cluster running multiple jobs, I/O and computation may contend for the interconnect, because different jobs do not necessarily perform computation and I/O at different times. In some cases the I/O servers, which may be doing computational work at the time (if you have designated the compute nodes as I/O nodes as well), will then have to devote some of their CPU time, network capacity, and disk I/O performance to PVFS traffic. This situation could hurt the performance of your code.

If you want to reduce the impact of PVFS traffic on the performance of your codes, you can either switch to a higher-performance network as mentioned above, or move the PVFS traffic to its own network. A separate network avoids congestion from computational traffic and lets PVFS use all of the available network capacity.
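To make the contention argument concrete, here is a deliberately naive model of a single node's link. It assumes that on a shared network PVFS simply gets whatever capacity the computational (MPI) traffic leaves over, while on a dedicated network it gets the whole link. The 80 MB/s compute load is a hypothetical number, and the GigE figure is the raw 1 Gb/s line rate with no protocol overhead, so treat this as a sketch of the reasoning rather than a performance prediction.

```python
# Naive link-sharing model (an assumption, not a measurement of PVFS).
GIGE_MB_PER_S = 1000 / 8  # 1 Gb/s raw line rate = 125 MB/s, ignoring protocol overhead


def pvfs_bandwidth(link_mb_s: float, compute_mb_s: float, dedicated: bool) -> float:
    """Bandwidth left for PVFS traffic on one node's link.

    On a dedicated PVFS network, MPI traffic never touches the link, so PVFS
    can use its full capacity. On a shared network, PVFS gets what remains
    after the computational traffic (never less than zero).
    """
    if dedicated:
        return link_mb_s
    return max(link_mb_s - compute_mb_s, 0.0)


if __name__ == "__main__":
    compute_load = 80.0  # hypothetical MPI traffic in MB/s during an I/O phase
    print("shared:   ", pvfs_bandwidth(GIGE_MB_PER_S, compute_load, dedicated=False))
    print("dedicated:", pvfs_bandwidth(GIGE_MB_PER_S, compute_load, dedicated=True))
```

Real networks degrade less cleanly than this (congestion and latency also matter), but the model captures why overlapping jobs on a single GigE network can starve PVFS while a second network does not.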

The basic concept in adding a second network for PVFS is to put the I/O servers on a separate network that the compute nodes (also called the clients) can see as well.

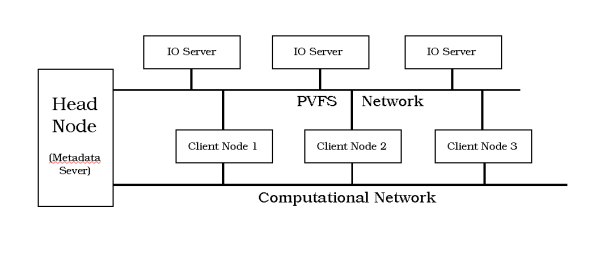

Figure One illustrates one option for configuring a cluster with separate I/O servers and clients and separate networks.

For either PVFS1 or PVFS2, I've chosen to have one head node that is also the single metadata server (PVFS2 can have multiple metadata servers). Two separate networks connect to the head node: one is the computational network for things like MPI traffic, and the other carries PVFS traffic. The compute nodes, also called the clients, are attached to both the computational network and the PVFS network. To simplify administration, put the PVFS network, and therefore the I/O servers, on a different subnet from the computational network.
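One way to keep the two networks straight is to write the address plan down and check it. The sketch below uses Python's standard `ipaddress` module to verify the Figure One layout: the head node and clients sit on both subnets, while the I/O servers sit only on the PVFS subnet. The subnets (10.0.1.0/24 for computation, 10.0.2.0/24 for PVFS), host names, and addresses are all hypothetical; substitute your own.

```python
import ipaddress

# Hypothetical address plan: one subnet per network.
COMPUTE_NET = ipaddress.ip_network("10.0.1.0/24")  # MPI / computational traffic
PVFS_NET = ipaddress.ip_network("10.0.2.0/24")     # PVFS traffic only

# Hypothetical hosts, their roles, and the addresses of their interfaces.
NODES = {
    "head":  {"role": "head",     "addrs": ["10.0.1.1", "10.0.2.1"]},
    "node1": {"role": "client",   "addrs": ["10.0.1.11", "10.0.2.11"]},
    "node2": {"role": "client",   "addrs": ["10.0.1.12", "10.0.2.12"]},
    "io1":   {"role": "ioserver", "addrs": ["10.0.2.101"]},
    "io2":   {"role": "ioserver", "addrs": ["10.0.2.102"]},
}

# Networks each role is attached to in the separate-I/O-server layout.
REQUIRED = {
    "head": {"compute", "pvfs"},
    "client": {"compute", "pvfs"},
    "ioserver": {"pvfs"},
}


def networks_of(addrs):
    """Return the set of named networks that a host's addresses fall into."""
    nets = set()
    for a in addrs:
        ip = ipaddress.ip_address(a)
        if ip in COMPUTE_NET:
            nets.add("compute")
        elif ip in PVFS_NET:
            nets.add("pvfs")
    return nets


def check_layout(nodes):
    """Return the hosts whose interfaces don't match the networks their role needs."""
    return [name for name, n in nodes.items()
            if networks_of(n["addrs"]) != REQUIRED[n["role"]]]


if __name__ == "__main__":
    bad = check_layout(NODES)
    print("layout OK" if not bad else f"misconfigured: {bad}")
```

Because every PVFS address lives in its own subnet, a glance at an address (or a firewall or routing rule keyed on the subnet) tells you which network a packet belongs to, which is the administrative simplification the separate subnet buys you.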

The PVFS1 and PVFS2 websites have information on how to configure and start the metadata server (usually the head node on the cluster); the process differs between the two versions. The websites also explain how to start the I/O servers.

The above configuration is just one of many. If you want the cluster nodes to be both I/O servers and clients, you can still use a separate network for PVFS traffic. The processors will be doing double duty, supporting the computations and serving PVFS data, but moving PVFS traffic to its own network still reduces the load on the computational network. Both configurations, separate I/O servers and clients versus combined I/O servers and clients, have cost and performance implications, which I'll consider next.
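As a first pass at the cost side, you can count the switch ports each layout needs. The sketch assumes one NIC, and therefore one switch port, per node per network, and ignores switch uplinks and spare ports; the function name and the 16-client, 4-server example are hypothetical. A value of zero dedicated I/O servers represents the combined layout, where every client is also an I/O server.

```python
def switch_ports(n_clients: int, n_io_servers: int = 0) -> dict:
    """Ports needed on each network for the two layouts described in the text.

    n_io_servers counts *dedicated* I/O servers; 0 means the combined layout,
    in which every client also serves PVFS data.
    Assumes one NIC (one switch port) per node per network.
    """
    head = 1  # head node / metadata server is attached to both networks
    compute = n_clients + head               # only clients and head carry MPI traffic
    pvfs = n_clients + n_io_servers + head   # clients, I/O servers, and metadata server
    return {"compute": compute, "pvfs": pvfs}


if __name__ == "__main__":
    print("separate:", switch_ports(16, 4))  # {'compute': 17, 'pvfs': 21}
    print("combined:", switch_ports(16))     # {'compute': 17, 'pvfs': 17}
```

The combined layout saves both the dedicated server hardware and the extra PVFS ports, at the price of the double duty on each node's CPU and disk described above.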