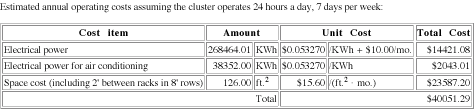

Figure Five shows operating costs based on electrical power consumption for the cluster itself, power consumption for the air conditioning unit, and rental of floor space, using recent business power and space rental costs for Lexington, Kentucky. The power cost for this design is significant: electricity for powering and cooling the cluster costs approximately 16 % of the purchase price. In other regions these costs can be considerably higher, since electric rates elsewhere in the U.S. are commonly twice those of Kentucky.

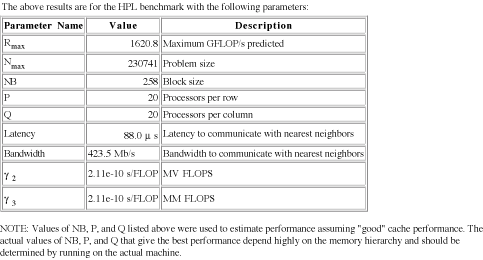

For each metric, the CDR outputs metric-specific information. Figure Six shows the HPL-specific output for the top design. This design is expected to have an Rmax of 1,620 GFLOPS with an Nmax of 230,741 elements. This problem size is bigger than the desired problem size because the HPL benchmark allows the problem to be scaled with the cluster size to maximize performance. The metric function could be modified to use a fixed problem size if desired. The other parameters listed are HPL-specific tuning parameters that the CDR used to determine Rmax. Actual performance may differ from predicted performance, but a comparison of the HPL model used in the CDR with the machines on the Top 500 list shows that reported results typically fall within 20 % of the result predicted by the HPL model.
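The way an HPL problem size grows with available memory can be illustrated with a common rule of thumb: pick the largest N whose N × N double-precision matrix fits in some fraction of total cluster memory. The sketch below is an illustration only and is not the CDR's HPL model; the function names and the 80 % fill fraction are assumptions introduced here.

```python
import math

BYTES_PER_DOUBLE = 8  # HPL solves a dense system of double-precision values


def matrix_bytes(n, bytes_per_element=BYTES_PER_DOUBLE):
    """Memory occupied by an n x n dense matrix."""
    return n * n * bytes_per_element


def hpl_problem_size(total_memory_bytes, fill_fraction=0.8,
                     bytes_per_element=BYTES_PER_DOUBLE):
    """Largest N whose N x N matrix fits in fill_fraction of memory.

    fill_fraction is an assumed rule-of-thumb value, not a CDR parameter.
    """
    usable_elements = int(total_memory_bytes * fill_fraction // bytes_per_element)
    return math.isqrt(usable_elements)


# Memory footprint of the Nmax quoted in the text.
nmax_bytes = matrix_bytes(230_741)
print(f"N = 230,741 needs about {nmax_bytes / 1e9:.0f} GB for the matrix")

# Doubling memory grows N by roughly sqrt(2), not by 2.
small = hpl_problem_size(128 * 10**9)
large = hpl_problem_size(256 * 10**9)
print(small, large, round(large / small, 3))
```

Because N grows only with the square root of memory, adding memory raises the problem size more slowly than it raises cost, which is one reason the choice between more memory and more nodes is not obvious.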

HPL has a trade-off between memory size and communication cost. Since the data size is allowed to increase with increasing memory size, it is difficult to know whether money is better spent adding nodes or adding memory. Interestingly, if we relax the constraint on how much memory is required, the fastest HPL cluster for $100,000 is a similar design. It has 248 nodes connected by a FNN of trees. Each node has only 512 MB of memory, giving the cluster just about 120 GB of usable memory. The smaller memory size allows more nodes to be purchased, giving an Rmax of 1,711 GFLOPS. It seems odd that the CDR chose a design with 2 GB per node when the optimal design for any memory size has 512 MB per node, and 1 GB per node would more than meet the memory requirement. However, the balance between number of nodes and memory in the 1 GB design is such that it is slower than the 2 GB per node design, and memory parts only come in discrete sizes.

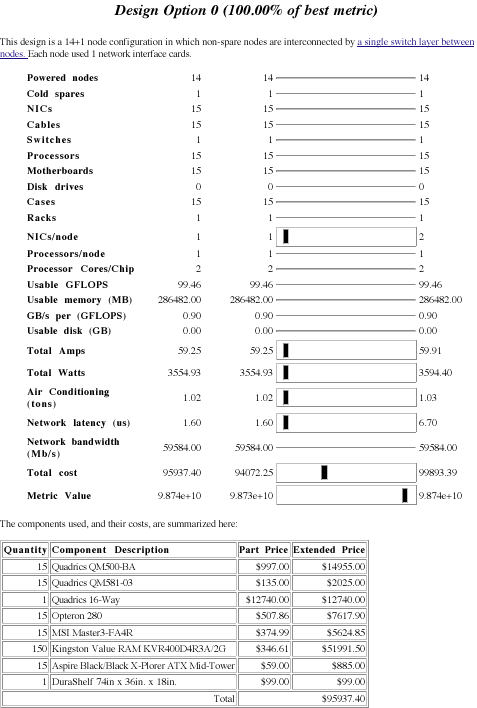

Many applications have additional constraints on the resources available. For example, the design described above takes 126 ft² and needs 8.8 tons of air conditioning. Many researchers do not have that much space or air conditioning available for their cluster. If we constrain memory to between 128 GB and 256 GB, and limit floor space to two racks, air conditioning to three tons, and power to 60 A (four standard 15 A circuits), then the best design has only 14 powered nodes with an Rmax of 98.7 GFLOPS. There are several reasons for essentially losing an order of magnitude in performance, which we can see from the design statistics in Figure Seven. First, power is the limiting factor for this design. It takes only one of the two racks allowed and less than one third of the cooling budget, but all of the power budget. To get enough memory into the few nodes that fit within the power budget, the CDR must choose more expensive 2 GB memory DIMMs; about half the budget is spent on DRAM.
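One way to see which budget limits a design is to compare each resource's usage against its limit and pick the highest utilization. The following is a minimal sketch of that idea, not code from the CDR; the function and key names are hypothetical, and the cooling usage of 0.9 tons is an assumed value chosen only to satisfy "less than one third" of the three-ton budget.

```python
def binding_constraint(usage, limits):
    """Return (resource, utilization) for the most heavily used budget."""
    utilization = {name: usage[name] / limits[name] for name in limits}
    tightest = max(utilization, key=utilization.get)
    return tightest, utilization[tightest]


# Limits from the text: two racks, three tons of cooling, 60 A of power.
limits = {"racks": 2, "cooling_tons": 3.0, "power_amps": 60.0}

# Usage of the constrained design; cooling_tons is an assumed illustrative value.
usage = {"racks": 1, "cooling_tons": 0.9, "power_amps": 60.0}

resource, fraction = binding_constraint(usage, limits)
print(f"limiting resource: {resource} at {fraction:.0%} of its budget")
```

With these numbers the power budget is fully used while the rack and cooling budgets are not, matching the observation that power is the limiting factor.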

If we relax the power budget and keep the two-rack limit, performance improves dramatically, from 98.7 GFLOPS to 466 GFLOPS. This speedup comes at a higher power cost. When power is not constrained, the current draw increases to 171 A, requiring at least 12 separate 15 A circuits. Moreover, the annual power and cooling costs are almost $4,600 vs. a little over $2,000 for the 60 A limit. This kind of analysis can help a buyer decide whether an air conditioning or power upgrade is worthwhile.
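The arithmetic behind this comparison can be checked with a short sketch. It uses only the figures quoted above; the annual costs are the rounded values from the text, the helper name is hypothetical, and the circuit count is a simple division that ignores any derating of circuit capacity.

```python
import math


def circuits_needed(total_amps, amps_per_circuit=15):
    """Smallest whole number of circuits that can carry the given current."""
    return math.ceil(total_amps / amps_per_circuit)


# Figures quoted in the text; annual costs are rounded approximations.
power_limited = {"rmax_gflops": 98.7, "amps": 60, "annual_cost_usd": 2000}
power_relaxed = {"rmax_gflops": 466.0, "amps": 171, "annual_cost_usd": 4600}

speedup = power_relaxed["rmax_gflops"] / power_limited["rmax_gflops"]
cost_ratio = power_relaxed["annual_cost_usd"] / power_limited["annual_cost_usd"]

print("circuits:", circuits_needed(power_limited["amps"]),
      "vs", circuits_needed(power_relaxed["amps"]))
print(f"speedup {speedup:.1f}x for {cost_ratio:.1f}x the annual power and cooling cost")
```

On these rounded figures, relaxing the power limit buys roughly 4.7 times the performance for roughly 2.3 times the annual power and cooling cost, before counting the one-time cost of installing the extra circuits.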

Conclusion

The CDR allows a designer to evaluate many design options and trade-offs quickly. Pricing information makes these trade-offs possible. Without application requirements, it is easy to spend a lot of money on hardware that does not help performance. Without price, the designer cannot tell how much performance is being given up in one area to buy more performance in another. We find the CDR useful in exploring the cluster design space when helping people design clusters, and we are working to add more features to improve its usefulness. The web interface, available at http://aggregate.org/CDR, makes the CDR easy to try for new applications. Source code is available through the BDR (Beowulf Design Rules) project at http://www.sourceforge.net/projects/bdr.

References

[DiMa00] Henry G. Dietz and Tim I. Mattox. KLAT2's flat neighborhood network. In Proceedings of the Extreme Linux track of the 4th Annual Linux Showcase, Atlanta, GA, USA, October 2000. USENIX Association.

[HMLDH00] Thomas Hauser, Timothy I. Mattox, Raymond P. LeBeau, Henry G. Dietz, and P. George Huang. High-cost CFD on a low-cost cluster. In Proceedings of SC2000 Conference on High Performance Networking and Computing, Dallas, TX, USA, November 2000. ACM Press.

[HoLW00] Adolfy Hoisie, Olaf Lubeck, and Harvey Wasserman. Performance and scalability analysis of teraflop-scale parallel architectures using multidimensional wavefront applications. International Journal of High Performance Computing Applications, 14(4):330–346, 2000.

[Levi05] Jason Levitt. The Web Developer's Guide to Amazon E-commerce Service. Lulu Press, 2005.

[PWDC04] Antoine Petitet, R. Clint Whaley, Jack J. Dongarra, and Andrew Cleary. HPL - a portable implementation of the high-performance Linpack benchmark for distributed-memory computers. http://www.netlib.org/benchmark/hpl/index.html, January 2004.