In the last two columns we learned a bit of the history of PVM (Parallel Virtual Machine), how to set up ssh as a remote shell that can be used by PVM to initiate remote PVM daemons within a cluster, and how to install and start up a "virtual machine" with PVM or its graphical front end, XPVM. We have done everything, in fact, but run an actual PVM task.

That's the topic of this column. Today we will start with a very simple PVM job template, one that functionally duplicates our very first example, which was based on perl (with threads) and ssh, distributing a simple binary and using the shell itself to manage communications.

Before starting, whether or not you read the previous columns, be sure that you have PVM installed on a large or small cluster and have ssh or rsh configured correctly so that PVM can be started from either the PVM console or the PVM GUI.

The Task: random_pvm

The task itself is to generate random numbers (badly) using PVM in a master/slave mode. This requirement means that we have to write two programs. First there is the master, which is responsible for starting up all the slave programs, passing them any required data, and retrieving their results one at a time as the random numbers are generated by the slaves. Then there is the slave, which naturally does all the work while the master sits around twiddling its figurative thumbs. Except that in PVM the master computer may well be a node in the virtual machine and may well run both a slave task and the master task, which is more democratic.

To emulate our previous efforts in this regard, we need to be able to tell the master various things -- the number of random numbers to generate, the number of slaves to use to generate them, and an adjustable "delay" each slave should insert after generating each random number to simulate doing a controlled amount of work associated with each number returned to the master program. So we'll make all of these parameters command line arguments of the master program random_pvm_master, with fairly obvious argument labels documented below.

With PVM, though, we'll be able to explore at a much finer level of detail than we could with a perl script using ssh as an interprocessor communications channel. We will find that our application, when run so that each random number is returned as it is generated in a message (and packet) all its own, is very sensitive to things like latency (the minimum time between successive small packets sent by the network) and bandwidth (the maximum amount of data per unit time that can be sent on the network in maximally efficient packets). We will clearly be able to see the (mostly bad) things that happen when computation time per number drops below communication time per packet, so that the parallel computation is latency bound. It makes sense, therefore, to add a new feature to the program to show at least one solution to these bad things: aggregating data so that it is transmitted more efficiently in a bandwidth-limited, not latency-limited, mode.

So we'll add a flag (the -b flag) to cause all the random number data generated by the slaves to be "bundled" into a single message for transmission all at once. We will see that this can lead to a truly dramatic restoration of "good" scaling behavior in a parallel computation gone bad because of small packet latency. If I had a heavy manual, I'd whop you upside the head at the very instant you observe this as it is one of the Enlightening Lessons for this month's column! (Sorry, old Unix fortune joke, don't worry if you don't get it.)

That's really about it. The master program contains fairly straightforward code for parsing the command line, spawning the slaves, sending them some useful data (such as a unique random number seed for each one) and retrieving the results aggregated or one number at a time.

The slaves are even simpler -- they parse THEIR command line to get one number (the delay). This method demonstrates one way to pass PVM-spawned programs their arguments. They then receive the rest of their start up information as a PVM message, demonstrating another way to pass PVM-spawned programs their arguments. Then it is off to the races, generating random numbers and either sending them back one at a time or storing them up and returning them in a nice, long message.
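The difference between the two return modes can be sketched without any PVM calls at all. In the sketch below, the emit callback is a hypothetical stand-in for "pack a message and send it to the master"; generate_and_send(), emit_fn, and count_values() are names invented for this illustration and do not appear in the real program.

```c
#include <assert.h>
#include <stdlib.h>

/* Hypothetical stand-in for "pack and send one message to the master". */
typedef void (*emit_fn)(const int *values, size_t count, void *ctx);

/* Example callback: tallies how many values have been delivered. */
void count_values(const int *values, size_t count, void *ctx)
{
    (void)values;              /* contents are not inspected here */
    *(size_t *)ctx += count;
}

/* Generate n pseudo-random ints and hand them to emit, either one
 * message per number or one bundled message at the end.
 * Returns the number of messages sent (0 if n is 0 or malloc fails). */
size_t generate_and_send(size_t n, int bundled, unsigned seed,
                         emit_fn emit, void *ctx)
{
    size_t i, messages = 0;
    int *buf;

    assert(emit != NULL);
    if (n == 0)
        return 0;
    buf = malloc(n * sizeof *buf);
    if (buf == NULL)
        return 0;

    srand(seed);               /* each slave gets its own seed */
    for (i = 0; i < n; i++) {
        buf[i] = rand();
        if (!bundled) {        /* latency-bound mode: one message each */
            emit(&buf[i], 1, ctx);
            messages++;
        }
    }
    if (bundled) {             /* bandwidth-bound mode: one long message */
        emit(buf, n, ctx);
        messages = 1;
    }
    free(buf);
    return messages;
}
```

The same numbers reach the master either way; only the message count changes, and the message count is what the network latency gets multiplied by.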

We won't go over the PVM commands required line by line in this article, in part because the program itself is pretty thoroughly documented (as is PVM). For most programmers, it is easier to just start with a working template and learn from there than it is to try to "design" a program working directly from a language reference. Our approach will therefore be to start directly with a working program and get it running, trusting that good programmers will need little more to get them going on their own projects.

Getting The Source Code

I'd like to publish the source code for the example program right here in the column, but it has some 800 lines of code (including lavish comments, of course) plus a Makefile and a man page and a few other supporting files (and my editor is pretty tough about space:-). As the previous section indicates, the program isn't terribly complex - it is just that there are two programs - one for the master and one for the slave, and each has things like a getopt() based command line parser that are standard and easy to understand but that do take up space.

Therefore your first step for this month's column is to retrieve the source code tarball from my personal website. You can also get it from Cluster Monkey.

Once you've obtained it, place the tarball in your usual source directory (I tend to use $HOME/Src) and unpack it with:

$ tar xvfz random_pvm.tgz

Now change into the random_pvm directory. Make sure that you have a PVM directory correctly set up in your home directory. Typically this would be $HOME/pvm3 and this directory should contain an architecture-specific binary directory such as $HOME/pvm3/bin/LINUXI386. If it does not, you may have to make these directory paths and may have to edit the Makefile if your version of PVM is different enough from mine that it uses different paths altogether.

I'm assuming that your home directory is NFS exported to your entire virtual machine, so that files copied into your PVM directory are available on all the nodes in the right place. If not, you may also have to arrange for the binaries to be copied into your PVM directory(s) on all of the nodes. Unfortunately you will probably need to use other documentation to see just how to proceed with this if your environment is complex or much different from mine, as this is intended to be a simple introduction.

Once the paths are correctly set up, you should be able to just enter make install in the random_pvm directory and the application pair should just build and install. I've deliberately kept the project self-contained and simple so that it is likely to build on most vanilla Linux systems without trouble. The install will copy both binaries into the PVM binary directory where the pvmd's on all the nodes should be able to find them.

Note Well! I get a warning from the build process on my system about sys_errlist being deprecated, telling me to use strerror instead. I presume that this warning comes from the step that links the PVM library and originates in the library itself. The warning can be ignored, and will hopefully go away (or may even have already gone away) in future PVM RPMs.

Running random_pvm_master

First, go ahead and use either the pvm console or the xpvm GUI to configure a virtual machine with 5 to 10 nodes (or more, of course). If you used the console, go ahead and quit (leaving the virtual machine running). If you used the GUI, leave it running in the background so you can "watch" it function.

| Sidebar One: Program Options |

|

To see the possible options enter:

$ random_pvm_master -h

Usage:

random_pvm [-b] [-d delay] [-n numslaves] [-r numrands] [-h] [-v]

-b toggles bundled communications.

-d delay sets the "cost" of generating a rand, in nanoseconds.

-n numslaves sets the number of slave tasks spawned.

-r numrands sets the number of random numbers generated.

Note that the delay loop is polling and hence expensive in CPU.

-v selects "verbose" operation for debugging, very noisy.

-h prints usage statement (this message) and exits.

to get a usage summary like this one. This option also tests that the binary itself is at least partly functional. The verbose mode is "interesting" but will mess up the timings given below as console I/O is very slow. It will also print out all the random numbers generated one or more times, so be cautious about using it for large numbers of rands. |
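The usage message notes that the delay loop polls and is therefore expensive in CPU. A minimal sketch of such a polling delay follows. It assumes a POSIX system with clock_gettime() and CLOCK_MONOTONIC; the helper names now_ns() and busy_delay_ns() are invented here, and the real slave may well implement its delay differently.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <time.h>

/* Current reading of the monotonic clock, in nanoseconds. */
static long long now_ns(void)
{
    struct timespec ts;
    clock_gettime(CLOCK_MONOTONIC, &ts);
    return (long long)ts.tv_sec * 1000000000LL + ts.tv_nsec;
}

/* Spin (no sleeping) until at least delay_ns nanoseconds have passed.
 * Returns the elapsed time actually measured, in nanoseconds. */
long long busy_delay_ns(long long delay_ns)
{
    long long start, elapsed;

    assert(delay_ns >= 0);
    start = now_ns();
    do {
        elapsed = now_ns() - start;   /* poll the clock: burns CPU */
    } while (elapsed < delay_ns);
    return elapsed;
}
```

Polling keeps the processor fully busy for the whole delay, which is the point: it simulates real computational work rather than an idle wait.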

Now let's try to run the task in parallel from the command line, for the time being.

$ random_pvm_master -d 1000000 -n 1 -r 10000

1 10000 1000000 10.6066873749

This time should be closely reproduced on "any" system as it is determined mostly by the selected delay of a million nanoseconds, or around 0.001 seconds per random number generated. To generate 10000 thus takes a bit more than 10 seconds, where the bit more is overhead and communications (even with one node there are communications in PVM). Hmmm, one wonders if running it with two nodes might not be faster:

$ random_pvm_master -d 1000000 -n 2 -r 10000

2 10000 1000000 5.4214822691

Amazing! Astounding! It runs in a bit more than half the time, exactly as one might expect from reading previous columns. Dare we go to four?

$ random_pvm_master -d 1000000 -n 4 -r 10000

4 10000 1000000 2.8777537722

Again, a bit more than half the time or a quarter of the single node time -- nearly linear scaling. One expects that at some point this will tail over, but we haven't reached that point yet (and won't in this column).
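To put numbers on "nearly linear", the usual definitions are speedup = T(1)/T(N) and efficiency = speedup/N. The small helpers below (the names speedup() and efficiency() are just illustrative) apply those definitions to the timings printed above.

```c
#include <assert.h>

/* Speedup of an N-task run relative to the single-task run. */
double speedup(double t1, double tn)
{
    assert(t1 > 0.0 && tn > 0.0);
    return t1 / tn;
}

/* Parallel efficiency: the fraction of ideal linear speedup achieved. */
double efficiency(double t1, double tn, int ntasks)
{
    assert(ntasks > 0);
    return speedup(t1, tn) / ntasks;
}
```

With the times above, speedup(10.6066873749, 5.4214822691) is about 1.96 for two slaves (roughly 98% efficiency), and speedup(10.6066873749, 2.8777537722) is about 3.69 for four (roughly 92%).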

Let's explore a different direction. At 0.001 seconds per random number, computation time is about 10x the packet latency; the program is still processor bound and hence likely to scale well. What if we shift the delay down to 0.0001 seconds per random number but generate ten times as many random numbers (so it should run in about the same amount of time)?

$ random_pvm_master -d 100000 -n 1 -r 100000

1 100000 100000 13.8996855957

This is a reasonable number, although it is a bit longer than we expect. Something is not quite right. Let's shoot for two nodes:

$ random_pvm_master -d 100000 -n 2 -r 100000

2 100000 100000 30.9584868153

Whoa! This took longer than one node. A lot longer. We can understand why. We had to send 100,000 messages over the network (instead of 10,000). Each message had a latency of perhaps 200 microseconds, so 100,000 of them take about twenty seconds to send. In addition, it takes around 10 seconds to "compute" the 100,000 rands at 100 microseconds each. Total: 30 seconds.
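That back-of-the-envelope estimate is simple enough to write down as a function. This is only the crude serial accounting used in the paragraph above (every message pays the full latency, every number pays the full delay, nothing overlaps), and the 200 microsecond latency is the same guess used there, not a measurement. The function name is invented for this sketch.

```c
#include <assert.h>

/* Crude latency-bound estimate: each message costs latency_s to send
 * and each number costs delay_s to "compute"; no overlap is assumed. */
double unbundled_estimate_s(long nmsgs, double latency_s, double delay_s)
{
    double communication, computation;

    assert(nmsgs >= 0 && latency_s >= 0.0 && delay_s >= 0.0);
    communication = (double)nmsgs * latency_s;  /* one message per rand */
    computation   = (double)nmsgs * delay_s;    /* one delay per rand */
    return communication + computation;
}
```

Calling unbundled_estimate_s(100000, 200e-6, 100e-6) gives 20 seconds of communication plus 10 seconds of computation, or 30 seconds in all, which is close to the measured 30.96.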

Things are really even more complex than this indicates when running on more than two nodes. For example, consider:

$ random_pvm_master -d 100000 -n 3 -r 100000

3 100000 100000 105.5901358108

96.110user 1.730sys 92.6%, 0ib 0ob 0tx 0da 0to 0swp 1:45.60

$ random_pvm_master -d 100000 -n 4 -r 100000

4 100000 100000 17.1603190026

Clearly something evil and nonlinear is happening where some of the node messages are considerably delayed and things are less predictable than one would like. Latency bound parallel computations are notorious for being sensitive to state -- things like the order of the communications and how busy the network is start to really matter. My test network isn't the quietest one in the world, but neither is it so noisy that such wide variations in runtime would occur if the network weren't jammed.

However, we can make our difficulties magically go away. Let's try the -b flag, and send ALL the random numbers generated by each host in single messages, which the network stack will then efficiently break up into large packets and send at a rate limited by bandwidth, not latency. All 400,000-odd bytes requested by the master should take a bit less than 0.5 seconds to send, not twenty or more, and poof!

$ random_pvm_master -b -d 100000 -n 4 -r 100000

4 100000 100000 2.6034640411

Nearly perfect scaling relative to a ten second base computation time is restored!
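The bandwidth side of the estimate can be sketched the same way. The 4 bytes per number is implied by the 400,000-odd bytes quoted above for 100,000 rands; the link speed is not stated anywhere in this column, so any bandwidth you plug in (the 10 Mbit/s figure below, for instance) is an assumption. The function names are invented for this example.

```c
#include <assert.h>
#include <stddef.h>

/* Total payload for nrands numbers of bytes_per_rand bytes each. */
size_t payload_bytes(size_t nrands, size_t bytes_per_rand)
{
    return nrands * bytes_per_rand;
}

/* Time to move `bytes` at a sustained bandwidth given in bytes/second.
 * Ignores per-packet overhead and the single start-up latency. */
double transfer_time_s(size_t bytes, double bandwidth_bytes_per_s)
{
    assert(bandwidth_bytes_per_s > 0.0);
    return (double)bytes / bandwidth_bytes_per_s;
}
```

For example, payload_bytes(100000, 4) is 400,000 bytes. On a hypothetical 10 Mbit/s link (1.25e6 bytes/s) that is about 0.32 seconds, and on a 100 Mbit/s link about a tenth of that. Either way it is tiny next to the twenty seconds spent on latency when every number travels alone.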

These results are not all that random_pvm has to teach us, not by a long shot, but it is enough for now. As an exercise, use your cluster to explore various ranges of delay, number of slaves, and number of rands, with and without aggregation of the communications. You should be able to generate pretty graphs, and those graphs should be readily understandable on the basis of our earlier discussions of Amdahl's Law.

If nothing else, you should get the following out of this month's column:

- Computation time > Communication time is Good (for speedup/scaling).

- Computation time < Communication time is Bad.

- Aggregating communications is one very important way of shifting a task from communication bound (bad) back to computation bound (good).

That and a working example of a Real Parallel Program written with PVM, that is. Not too shabby.

And Now For a Bit of Fun...

To wrap up this month's column, let's run one more trivial example but look at it using XPVM (the pretty PVM GUI):

$ random_pvm_master -d 100000000 -n 6 -r 100; \

sleep 1; \

random_pvm_master -b -d 100000000 -n 6 -r 100

6 100 100000000 1.7201111265

6 100 100000000 1.7161686264

The first of these generates a run of only 100 random numbers from six hosts (but with a 0.1 second delay in between), sending each number back to the master as it is generated. The second does the same with aggregation. The sleep in between is to separate the two runs in the XPVM trace.
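Both runs finish in about 1.72 seconds, and a quick sanity check shows why. The sketch below assumes the numbers are split as evenly as possible among the slaves, with the busiest slave getting the rounded-up share; that is a guess about how the work is divided, not something read out of the source. The function name is likewise invented.

```c
#include <assert.h>

/* Ideal compute-bound runtime: the busiest slave generates
 * ceil(numrands / numslaves) numbers at delay_ns nanoseconds each.
 * Communication and startup costs are ignored. */
double ideal_runtime_s(long numrands, int numslaves, long delay_ns)
{
    long per_slave;

    assert(numrands >= 0 && numslaves > 0 && delay_ns >= 0);
    per_slave = (numrands + numslaves - 1) / numslaves;  /* ceiling */
    return (double)per_slave * (double)delay_ns * 1e-9;
}
```

For this run, ideal_runtime_s(100, 6, 100000000) works out to 17 numbers at 0.1 seconds each, or 1.7 seconds, leaving only a couple of hundredths of a second for startup and communication in either mode.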

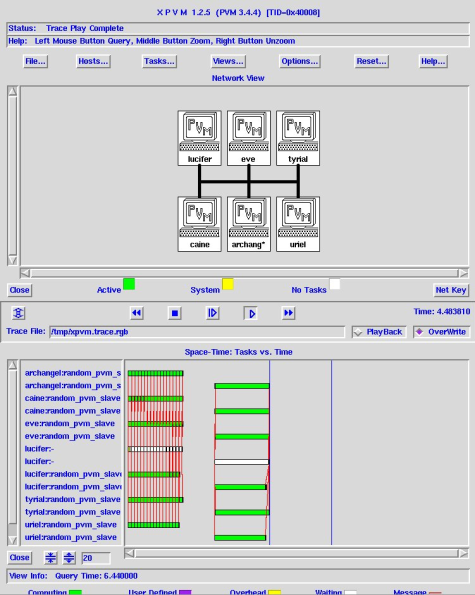

Examine the traces of these two tasks on your cluster with XPVM (see e.g. Figure One) and you will note that the first job is represented by six very busy green bars (the slave tasks) each talking to the mostly idle white bar (the master task) with the communications represented by lots of little red threads. Remember, the red threads are fun but "bad" from the point of view of scaling.

The second job is also six green bars for the six slaves working full time, a truly thumb-twiddling idle white master, and just one red thread at the end where the results are shot back. At this (computation bound) scale, there is no meaningful difference in runtime, but imagine the dense forest of red that would result on this timescale if we were sending back thousands of rands per second from each of the nodes. Eventually it literally saturates the ability of the network to keep up and the task is liberally and somewhat unpredictably delayed.

That's it for this month. At this point you are ready to become a real parallel programmer; random_pvm can easily be hacked into all sorts of master/slave parallel tasks both useful and just for fun. In future columns we may return to PVM and consider other programming models -- and maybe even more PVM commands!

| Sidebar Two: PVM Resources |

|

PVM Home Page

PVM Users Guide

PVM: A User's Guide and Tutorial for Networked Parallel Computing, Geist, Beguelin, Dongarra, Jiang, Manchek and Sunderam (MIT Press)

|

This article was originally published in ClusterWorld Magazine. It has been updated and formatted for the web. If you want to read more about HPC clusters and Linux, you may wish to visit Linux Magazine.

Robert Brown, Ph.D, has written extensively about Linux clusters. You can find his work and much more on his home page.