Why Simulate An ABI? Portability!

The MPI standard specifies a programming-interface (API), not a binary interface (ABI). This API allows applications to be compiled against any (standard-conforming) MPI-implementation without any changes to the source-code.

Once compiled against a specific MPI implementation, however, the application must also be launched using the launch-scripts that come with that MPI-implementation. Switching to another launching-mechanism requires the user to relink the application against the corresponding MPI-library. In general, this relinking is not possible without first recompiling the application, because different MPI-implementations are not guaranteed to be binary compatible.

The MPI-standard specifies an API rather than an ABI: for instance, it defines neither the memory layout of MPI_Comm objects nor the exact value of MPI_ERR_COMM. This design leaves MPI implementers plenty of flexibility for optimization.
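To make the API/ABI distinction concrete, here is a minimal sketch of two hypothetical implementations that both satisfy the same API but disagree at the binary level. The names ImplA_Comm, ImplB_Comm and the numeric values are invented for illustration and are not taken from any real MPI-implementation.

```c
#include <stddef.h>

/* Hypothetical implementation A: a communicator handle is a plain integer. */
typedef int ImplA_Comm;
enum { IMPL_A_ERR_COMM = 5 };      /* invented value */

/* Hypothetical implementation B: a handle is a pointer to an internal struct. */
struct implb_comm_internal;        /* layout known only to implementation B */
typedef struct implb_comm_internal *ImplB_Comm;
enum { IMPL_B_ERR_COMM = 12 };     /* invented value */

/* Returns 1 only if object code compiled against A could be linked with B
 * as far as these two details are concerned. */
int abi_matches(void)
{
    return sizeof(ImplA_Comm) == sizeof(ImplB_Comm)
        && IMPL_A_ERR_COMM == IMPL_B_ERR_COMM;
}
```

Source code that only writes MPI_Comm and MPI_ERR_COMM compiles against either variant, but the compiled object code has the handle type and the constant baked in, which is why relinking alone is not enough.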

By striving for optimal performance, however, the MPI standard reduces portability. In practice, applications must be launched using the same MPI-implementation as the one they were compiled against. This is no problem when the application is compiled and launched on the same machine, but it is a severe constraint for shrink-wrapped software.

| What is a platform? |

A platform-definition lists the requirements on the hardware and software environment needed to run an executable. These requirements mainly specify:

|

Shrink-wrapped software is only available on a limited number of platforms, which are selected by the application-developer. The HPC-world, in which MPI is mainly used, consists of many diverse platforms, in contrast to the world of mainstream x86 applications (e.g. office applications). Additionally, HPC applications are less portable than office-type applications: an office application does not care whether it runs on an Intel EM64T processor or an AMD Opteron. HPC executables, however, are generally not portable between these two processors because they typically exploit instructions such as SSE3 or 3DNow! that are not supported by all processors. Because application executables are also tied to a specific MPI-library and run-time environment, vendors find it difficult to provide shrink-wrapped HPC software optimized for the user's platform.

The advantages and disadvantages of standardizing an ABI for MPI have been discussed many times. During one of those discussions on the Beowulf mailing list, a solution was put forward: implement a library on top of the existing MPI-implementations that provides binary compatibility [1]. Jeff Squyres coined the name MorphMPI for it. Because we at Free Field Technologies [2] regularly get requests to support yet another MPI-implementation, we finally decided to implement MorphMPI.

How Does It Work?

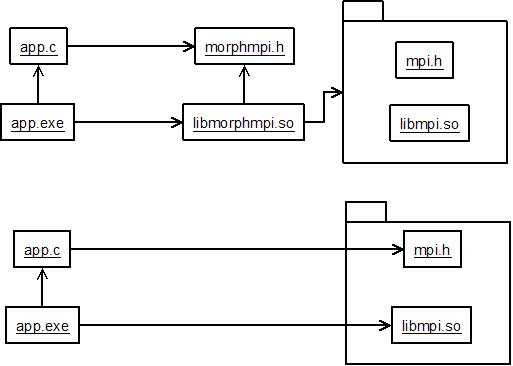

All problems in computer science can be solved by another level of indirection (D. Wheeler). MorphMPI does exactly this. MorphMPI is a library on top of MPI. Applications using MorphMPI have no header-dependency on MPI, and MorphMPI itself can be recompiled to use any MPI implementation. Thus, object-code using MorphMPI can be linked, through MorphMPI, with any MPI implementation.

MorphMPI comes with a morphmpi.h header that contains all of the MPI symbols but prefixed with MorphMPI instead of MPI. The object-code of the application thus only contains references to MorphMPI-symbols. After linking the object-code to the MorphMPI-library and executing, the application will call MorphMPI-functions using MorphMPI-constants. At run-time, the morphmpi library will forward the MorphMPI-calls to actual MPI-calls while translating the MorphMPI-constants and passing on the corresponding MPI-objects. For instance, when the application calls

MorphMPI_Comm_size(MorphMPI_COMM_WORLD, p_size);

the MorphMPI_Comm_size function will first look up the MPI equivalent of the MorphMPI_COMM_WORLD communicator and then call MPI_Comm_size. In this case, a pre-defined communicator was used, but a user-defined communicator could have been used as well. To be able to translate user-defined communicators, MorphMPI must map every MorphMPI-communicator to its equivalent MPI-communicator. This mapping is set up in the communicator-constructor. For instance, when calling

MorphMPI_Comm_dup(MorphMPI_COMM_WORLD, p_my_comm);

the equivalent MPI-function will be called with MPI_COMM_WORLD as first argument and a pointer to a new variable of type MPI_Comm as second argument. After MPI_Comm_dup returns successfully, a new MorphMPI_Comm is created and mapped to the newly created MPI_Comm. Thus, the next time MorphMPI_Send is called with this new MorphMPI-communicator, MorphMPI will be able to translate it to the equivalent MPI-communicator. The same goes for the datatype used in MorphMPI_Send, while the other arguments are forwarded to the MPI_Send function as is.
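The lookup-and-forward scheme described above can be sketched as follows. This is an illustration under stated assumptions, not MorphMPI's actual source: the table-based mapping, the helper morph_to_mpi and the integer handle type are hypothetical, and the small MPI_* stubs at the top only stand in for a real mpi.h so that the sketch compiles on its own.

```c
#include <assert.h>

/* --- Stand-in for a real MPI back end (stubs, for illustration only) --- */
typedef struct backend_comm { int size; } *MPI_Comm;
static struct backend_comm backend_world = { 4 };   /* pretend 4 processes */
static struct backend_comm backend_pool[8];
static int backend_used = 0;
#define MPI_COMM_WORLD (&backend_world)
#define MPI_SUCCESS 0

static int MPI_Comm_size(MPI_Comm comm, int *size)
{
    *size = comm->size;
    return MPI_SUCCESS;
}

static int MPI_Comm_dup(MPI_Comm comm, MPI_Comm *newcomm)
{
    assert(backend_used < 8);
    backend_pool[backend_used].size = comm->size;
    *newcomm = &backend_pool[backend_used++];
    return MPI_SUCCESS;
}

/* --- MorphMPI side: handles are plain ints, independent of the back end --- */
typedef int MorphMPI_Comm;
#define MorphMPI_COMM_WORLD 0
#define MORPH_MAX_COMMS 8

static MPI_Comm comm_table[MORPH_MAX_COMMS];   /* MorphMPI handle -> MPI handle */
static int comm_count = 0;

/* Translate a MorphMPI communicator into the back end's communicator. */
static MPI_Comm morph_to_mpi(MorphMPI_Comm c)
{
    if (comm_count == 0) {                      /* register the pre-defined one */
        comm_table[MorphMPI_COMM_WORLD] = MPI_COMM_WORLD;
        comm_count = 1;
    }
    assert(c >= 0 && c < comm_count);
    return comm_table[c];
}

int MorphMPI_Comm_size(MorphMPI_Comm comm, int *p_size)
{
    return MPI_Comm_size(morph_to_mpi(comm), p_size);
}

int MorphMPI_Comm_dup(MorphMPI_Comm comm, MorphMPI_Comm *p_newcomm)
{
    MPI_Comm dup;
    int err = MPI_Comm_dup(morph_to_mpi(comm), &dup);
    if (err != MPI_SUCCESS)
        return err;          /* a real layer would also translate error codes */
    assert(comm_count < MORPH_MAX_COMMS);
    comm_table[comm_count] = dup;               /* record the new mapping */
    *p_newcomm = comm_count++;
    return err;
}
```

Because the application only ever sees the integer handles, rebuilding this layer against a back end with a different MPI_Comm type does not change the application's object code.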

MorphMPI provides an equivalent not only for the MPI C-interface but also for the Fortran-interface. The Fortran interface is implemented in C and therefore relies on the C-interface of MorphMPI. This design means that MorphMPI must be able to translate Fortran-specific constants such as MPI_COMPLEX in C. However, not all MPI-implementations provide these constants in their C-interface.
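As a rough illustration of a Fortran binding written in C, the sketch below wraps a C function in a Fortran-callable entry point. The symbol name morphmpi_comm_size_ assumes the common "lowercase plus trailing underscore" mangling, which is compiler-dependent, and it assumes that a Fortran INTEGER matches a C int; both are assumptions, as is the stand-in body of MorphMPI_Comm_size.

```c
#include <assert.h>
#include <stddef.h>

/* Stand-in for the MorphMPI C-interface (hypothetical single-process body). */
typedef int MorphMPI_Comm;
static int MorphMPI_Comm_size(MorphMPI_Comm comm, int *p_size)
{
    (void)comm;
    *p_size = 1;
    return 0;
}

/* Fortran-callable wrapper: Fortran passes every argument by reference and
 * receives the error code through a trailing ierr argument. */
void morphmpi_comm_size_(const int *comm, int *size, int *ierr)
{
    assert(comm != NULL && size != NULL && ierr != NULL);
    *ierr = MorphMPI_Comm_size((MorphMPI_Comm)*comm, size);
}
```

From Fortran this would be reached with something like call MorphMPI_Comm_size(comm, size, ierr), with all translation work delegated to the C layer.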

Using MorphMPI

To use MorphMPI, first replace the MPI prefix of all MPI-symbols in the application with MorphMPI. Prefixing all symbols with MorphMPI avoids multiple definitions of the MPI-symbols at link-time and allows MorphMPI to easily call the equivalent MPI-functions. To replace all MPI-symbols with their MorphMPI equivalents, either use the script that comes with MorphMPI or include the header morphornot.h instead of mpi.h. The morphornot.h header includes mpi.h unless the preprocessor-token MORPH is defined. If MORPH is defined, preprocessor directives convert all MPI-functions and constants to their MorphMPI equivalents and morphmpi.h is included.
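The morphornot.h mechanism can be pictured with the sketch below. The macro lines are a guess at what such a header might contain, not a copy of the real file, and the declarations at the top are hypothetical stand-ins for morphmpi.h so that the example compiles without MorphMPI installed.

```c
/* Stand-in for morphmpi.h (hypothetical declarations, single-process body). */
typedef int MorphMPI_Comm;
#define MorphMPI_COMM_WORLD 0
static int MorphMPI_Comm_size(MorphMPI_Comm comm, int *p_size)
{
    (void)comm;
    *p_size = 1;
    return 0;
}

/* Normally set on the compiler command line, e.g. with -DMORPH. */
#define MORPH 1

/* --- What a morphornot.h-style header might look like --- */
#ifdef MORPH
/* the real header would include morphmpi.h here */
#define MPI_Comm        MorphMPI_Comm
#define MPI_COMM_WORLD  MorphMPI_COMM_WORLD
#define MPI_Comm_size   MorphMPI_Comm_size
#else
#include <mpi.h>
#endif

/* --- Application code, written against the plain MPI names --- */
static int world_size(void)
{
    int n = 0;
    MPI_Comm comm = MPI_COMM_WORLD;
    MPI_Comm_size(comm, &n);     /* expands to MorphMPI_Comm_size when MORPH */
    return n;
}
```

With this approach the application source keeps its MPI spelling, and the choice between plain MPI and MorphMPI is made by a single compiler flag.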

Next, the MorphMPI library must be compiled against an MPI-implementation of your choice. This library will then be able to translate all MorphMPI-function calls and MorphMPI-constants to the corresponding functions and constants of that MPI-implementation.

Finally, the application should be linked to this MorphMPI-library and can then be launched using the launching-mechanism that comes with the MPI-implementation of your choice.

Availability

MorphMPI currently covers a large portion of the MPI-1.1 interface and a few functions of the MPI-2.0 interface.

MorphMPI has been tested using a large portion of the regression tests of MPICH2-1.0.5p4 and inside development versions of the finite element applications for (aero-)acoustics ACTRAN [3]. Since ACTRAN contains the MUMPS [4] solver, MUMPS as well as its dependency BLACS were morphed and tested.

MorphMPI is available under the LGPL and is hosted on SourceForge [5]. Making the source-code available is in fact essential to MorphMPI, because it allows users to compile and link morphed applications with any MPI-implementation.

An Extra Feature

Besides being linked with any MPI-implementation, MorphMPI can also be built without linking to an MPI-implementation at all. In this case, MorphMPI acts as a fake MPI library: an application linked against MorphMPI compiled in fake-mode can still call all MPI functions, which behave as if the size of MPI_COMM_WORLD were 1.
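A few functions of such a fake-mode could look like the sketch below. The bodies and the value of MorphMPI_SUCCESS are assumptions made for illustration; they simply encode what a single-process run implies.

```c
#include <assert.h>
#include <stddef.h>

typedef int MorphMPI_Comm;
#define MorphMPI_COMM_WORLD 0
#define MorphMPI_SUCCESS    0      /* hypothetical value */

/* With no MPI back end there is exactly one process. */
int MorphMPI_Comm_size(MorphMPI_Comm comm, int *p_size)
{
    (void)comm;
    assert(p_size != NULL);
    *p_size = 1;
    return MorphMPI_SUCCESS;
}

/* The only process always has rank 0. */
int MorphMPI_Comm_rank(MorphMPI_Comm comm, int *p_rank)
{
    (void)comm;
    assert(p_rank != NULL);
    *p_rank = 0;
    return MorphMPI_SUCCESS;
}

/* A barrier over a single process has nobody to wait for. */
int MorphMPI_Barrier(MorphMPI_Comm comm)
{
    (void)comm;
    return MorphMPI_SUCCESS;
}
```

An application built this way can be started directly, without any MPI launcher, which is convenient for serial runs and debugging.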

Because MorphMPI tracks the creation and deletion of groups and communicators, it is also able to report any group- or communicator-leaks when Finalize is called. For instance, MorphMPI detected several such leaks in the regression tests of mpich2-1.0.5p4, but (un)fortunately these leaks had already been patched before they could be reported on the basis of MorphMPI's leak-reports.
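Leak detection of this kind can be sketched with a simple counter of live communicators. The counter, the helper morph_leaked_comms, the message text and the constant values are all hypothetical; a real layer would also forward each call to the underlying MPI-implementation and track groups in the same way.

```c
#include <assert.h>
#include <stdio.h>

typedef int MorphMPI_Comm;
#define MorphMPI_COMM_WORLD 0
#define MorphMPI_COMM_NULL  (-1)   /* hypothetical value */
#define MorphMPI_SUCCESS    0      /* hypothetical value */

static int next_handle = 1;   /* 0 is reserved for the world communicator */
static int live_comms  = 0;   /* user-created communicators not yet freed */

int MorphMPI_Comm_dup(MorphMPI_Comm comm, MorphMPI_Comm *p_newcomm)
{
    (void)comm;               /* a real build would call MPI_Comm_dup here */
    *p_newcomm = next_handle++;
    ++live_comms;
    return MorphMPI_SUCCESS;
}

int MorphMPI_Comm_free(MorphMPI_Comm *p_comm)
{
    assert(live_comms > 0);
    --live_comms;
    *p_comm = MorphMPI_COMM_NULL;
    return MorphMPI_SUCCESS;
}

/* Number of communicators created but never freed. */
int morph_leaked_comms(void)
{
    return live_comms;
}

int MorphMPI_Finalize(void)
{
    if (live_comms > 0)
        fprintf(stderr, "MorphMPI: %d communicator(s) leaked\n", live_comms);
    return MorphMPI_SUCCESS;
}
```

Since every constructor and destructor already passes through the translation layer, this bookkeeping adds little beyond what the handle mapping needs anyway.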

Acknowledgments

This library was implemented at Free Field Technologies [2]. Thanks to Mathieu Gontier for revising the documentation and to Jeff Squyres and Tim Prins for feedback on this article.

| References |

Toon Knapen can be reached at toon.knapen ( a t ) telenet.be and would like to acknowledge Free Field Technologies for their assistance in this project.