Feel like ranting about something, I do

Good information about HPC clusters is hard to find. Not only is the market relatively small, but clusters are often homegrown affairs that are off the radar screens of traditional market watchers. How, then, do we find information on clusters? This very question prompted me, in the past, to place small single-question polls on the ClusterWorld.com website (now inactive). And, because I like to test the validity of the prevailing notion of the day, I will continue to do small non-scientific polling here on ClusterMonkey. Trust me here: the poll results just add another log to that raging HPC fire called the Top500 debate. (For the brave, search for "Top500" in the Beowulf Archives to get a taste of the debate.)

From the outset, it should be noted that the polls were entirely unscientific. A respondent could easily defeat the "one vote, one browser" rule by removing a cookie, and there was no background information on the population of respondents. Nevertheless, there appear to be some nuggets of information in the data. As each poll progressed, it usually developed an early trend that held as the number of respondents grew. New polls were not introduced until existing polls had over 100 respondents.

Unfortunately, the old ClusterWorld polls are no longer active. In this column, I would like to point out some trends that go against the market grain. Keep in mind that these kinds of polls are not definitive. I like to think of them as a 10,000-foot (3,048-meter) view that may provide some general direction for the market.

In case you have not noticed, there are polls on the front page of ClusterMonkey. If you are interested, you can view all the ClusterMonkey polls. I still think polling the community in this way is interesting, albeit not very scientific. The poll page also includes other surveys that seem interesting, including the Council on Competitiveness/IDC surveys (real surveys, by the way).

Bragging Rights

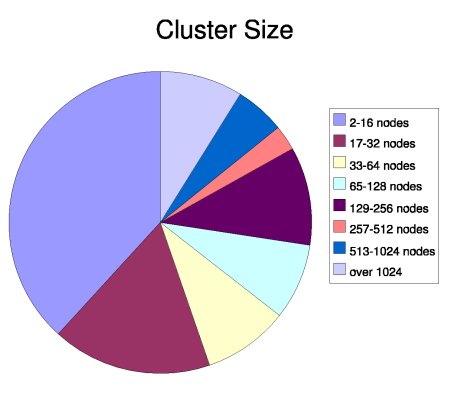

Very often, we hear about big clusters and their place on the Top500 list. Many believe that the Top500 is really a "high tech pissing contest." If you read the press releases, it is certainly used that way. To see just how relevant the Top500 is to most cluster users, let's take a look at Figure One, where respondents were asked about cluster size. The most interesting result is that clusters of 32 nodes or fewer accounted for over 50% of all responses.

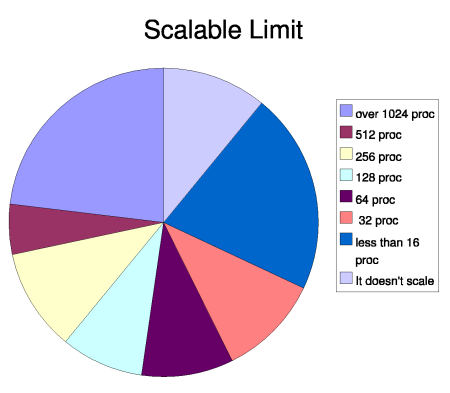

As an interesting follow-up, readers were asked about the maximum scalability of their applications. In this poll, 50% of the respondents have applications that do not scale beyond 64 nodes, which means a larger, faster cluster will do them no good. Of course, they could run more copies of their parallel program on a larger cluster, but they would not be using the combined capacity of all the processors, which is what the Top500 sets out to measure.

With these results in hand, one has to wonder: why all the interest in the Top500? The processor count in the top ten systems is easily one or two orders of magnitude greater than most codes can use. Why, then, do most people care about this list? It is like using the results of a NASCAR race to decide what kind of car I should buy.

In addition, the large number of smaller clusters also supports the notion that scalability limits many applications. When you think about it in these terms, the Top500 as a buying guide seems kind of silly.

The other interesting thing about the results is that they seem to support the Blue Collar Computing idea. At the high end, there is a peak of "heroic" codes that use more than 1024 CPUs, then a valley in the middle, and another peak at 16 processors or fewer. This trend is best seen in the data plotted as a bar graph on the ClusterWorld.com site.

Of course, there are applications that justifiably need large numbers of processors, but the focus on large clusters is more about press releases and less about getting work done. A case in point: when the Virginia Tech PowerMac cluster, System X, was being built, much fanfare was made about its fourth-place finish on the Top500 list (Nov 2003). Today, I would be very curious to see how those 2200 processors are being utilized. My guess is that most of the jobs run on fewer than 64 processors. Alas, as of June of this year, System X has dropped to 14th place. And the people who are actually using it probably don't really care.

Improving Things

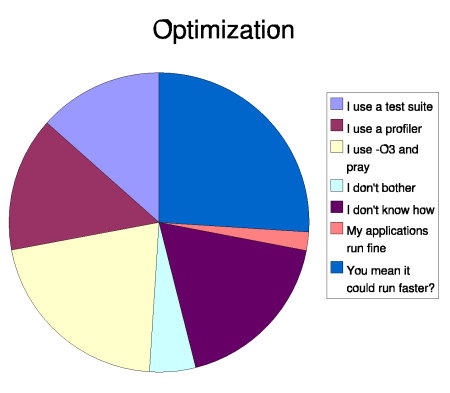

So much for the contest. An interesting question about optimization was also asked in the polls. Optimization can take on many dimensions, so to keep it simple, the question was "How do you optimize your cluster?" Indeed, how do you know the new kernel you just loaded is working as you expect? Figure Three provides some data on how people handle this situation. Basically, the majority of respondents do not do anything in this area. About 27% use a test suite or profiler, and the rest just take what they can get. Interestingly, 18% said they do not know how to optimize a cluster. Cheap, fast hardware is definitely a disincentive to optimization, but with processor clock speeds leveling off as multiple CPUs are packed onto the die, optimization and scalability may take on a whole new meaning.

This situation is actually more troubling than one might imagine. The dual-core approach to CPUs introduces yet another level of complexity to a cluster. For instance, is it better to run on 8 dual-core nodes or 16 single-core nodes? Indications are that there can be a big difference. If we do not know how to optimize current clusters, then multi-core designs will present an even bigger challenge.

So What is Stopping You?

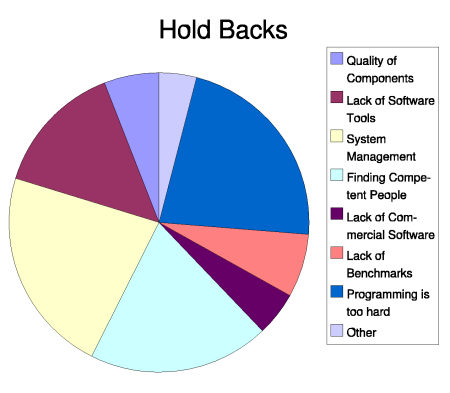

The final poll is about deterrents to cluster usage or, in a more politically correct sense, cluster challenges. Figure Four shows these results. The three big issues are people, programming, and management. You could lump software tools into the programming category, which pretty much makes programming the clear winner (or loser, depending on how you look at things). These results mirror what pretty much everyone in the cluster community knows: clusters are hard to manage and program, and finding good people is even more difficult. If clusters are going to become more mainstream, these issues need to be addressed.

No Conclusion

My goal is to shed some light on what is really important to the typical cluster user. The Top500 is a wonderful idea with valuable historical data. Unfortunately, a Top500 data point for a given cluster is of little value to the average "small scale" cluster user. According to the poll results, using the Top500 as a yardstick for your cluster is not going to be effective. And all the Top500 hoopla foisted on the market by vendors is largely noise feeding into what I consider a busted myth. Finally, as stated, these polls are not very scientific, so my results could be noise as well. The polls do suggest some trends that probably should be the focus of further surveys. Any real conclusions are tenuous at best. Rants are fine, though.


Douglas Eadline is the editor of ClusterMonkey.