Ethernet has been a key component in HPC clustering since the beginning. Over the years, interconnects like Myrinet and InfiniBand (and some others) have replaced Ethernet as the main compute interconnect, largely due to better performance. High performance interconnects like InfiniBand are now the interconnect of choice for those who require performance and scalability.

With the availability of such high performance interconnects, one has to ask: why do people still use Ethernet? The answer is threefold. First, Gigabit Ethernet (1000 Megabits/second) is "everywhere." Multiple Gigabit Ethernet (GigE) ports can be found on almost every server motherboard, and users are comfortable with Ethernet technology. Second, it is inexpensive. The commodity market has pushed prices to the point where low node count clusters can expect Cat 5e cabling and switching costs to be between $10 and $20 per port. And finally, Ethernet is virtually plug-and-play. In other words, it just works.

Whether Ethernet is good enough depends on the application. With today's multi-core nodes, more pressure is put on the interconnect, and a low performance interconnect can cause cores to wait on I/O. In some cases, however, the communication overhead is low and an Ethernet connection works just fine as a cluster interconnect.

In recent years, GigE has been struggling in terms of performance when used with modern processors. Recall that many of the first HPC clusters used Fast Ethernet (100 Megabits/second) and hit a performance wall as compute nodes got faster. The market is presenting a similar situation with the cross-over from GigE to 10 Gigabit Ethernet (10,000 Megabits/second). Thus, one can expect a large number of 10 Gigabit Ethernet (10 GigE) clusters in the coming year.

Recently, I wrote a white paper for Appro International entitled High Performance Computing Made Simple: The 10 Gigabit Ethernet Cluster (short registration required) that discusses the state of 10 GigE clustering and provides some recipes for a basic cluster using 10 GigE. It covers many of the hardware options, including switches, NICs, and cabling (including RJ-45 connectors and Cat6 cabling solutions). In the remainder of this article, I will highlight some sections of the white paper. For complete coverage, download the free white paper.

10 GigE Is Here

The big news in Ethernet technology is not necessarily that 10 Gigabit Ethernet is available (it has been for years), but rather that prices have come down enough for users to consider deploying it in clusters. In addition, the ability to use Cat6 cabling and RJ-45 connectors reduces costs and allows for a familiar cabling solution.

If history is a guide, 10 GigE will continue growing into the HPC cluster market. I do not, however, believe in all-or-nothing scenarios (at least in HPC). InfiniBand (IB) is not going anywhere. There is room for both IB and 10 GigE, just as there are different types of cars. They both get you where you want to go, but depending on your needs and budget, the one that is right for you may not be the best choice for the next guy. Therefore, just because I am talking about 10 GigE does not mean I am predicting the demise of IB. Rather, I am predicting the demise of GigE in clusters, the interconnect used in 56% of the recent Top500 list.

The use of Ethernet in clusters is due to the following rule of thumb: Speed, Simplicity, Cost; pick any two. I believe users who find simplicity and cost important will choose 10 GigE. IB is already faster and has better latency, and if you need this level of performance, you are not even looking in the Ethernet direction. The joy of clustering is that one size does not fit all and you can build your cluster around your needs.

Keep It Simple

When it comes to clusters, keeping it simple is often the preferred approach. Keeping things simple also implies using best-of-breed hardware and software. Cutting corners with cheap hardware always ends up costing more in the long run and complicates operations.

Making the right choices for nodes and network can be very important. Indeed, simplicity implies "plug and play" capabilities that require minimal configuration or tuning. In addition, making sure the nodes and network are balanced ensures that the processors reach their fullest potential. This aspect is often overlooked when specifying a cluster. Working within a fixed budget is often an exercise in balancing the cost or number of nodes against the quality of the interconnect. In general, if Ethernet will support your applications, then it may be possible to purchase more nodes. If, on the other hand, you require a more expensive high performance interconnect, you may need to purchase fewer nodes to stay within your budget.
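The budget trade-off described above can be sketched with some simple arithmetic. The following Python snippet is only an illustration: the function name `nodes_within_budget` and all of the prices are hypothetical placeholders, not figures from the white paper, and it assumes each node needs exactly one interconnect port (NIC, cable, and switch port rolled into one per-port cost).

```python
def nodes_within_budget(budget, node_cost, port_cost):
    """Return how many nodes fit in a fixed budget when every node
    also needs one interconnect port (NIC + cable + switch port)."""
    if budget < 0 or node_cost <= 0 or port_cost < 0:
        raise ValueError("budget and costs must be non-negative, node_cost > 0")
    # Each node consumes its own cost plus one port on the interconnect.
    return int(budget // (node_cost + port_cost))


# Hypothetical prices, chosen only to show the shape of the trade-off.
budget = 100_000
node_cost = 3_000

cheaper_interconnect = nodes_within_budget(budget, node_cost, port_cost=500)
pricier_interconnect = nodes_within_budget(budget, node_cost, port_cost=1_500)

print("nodes with the cheaper interconnect:", cheaper_interconnect)
print("nodes with the pricier interconnect:", pricier_interconnect)
```

With these made-up numbers, the cheaper interconnect leaves room for several more nodes. Whether those extra nodes actually help depends on how sensitive your applications are to interconnect performance, which this sketch does not model.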

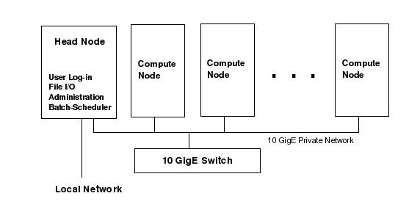

A simple cluster design is shown in Figure One. There is a single head node that has access to the LAN and provides log-in access for the users. There are a number of compute nodes where the actual work is performed. A private Ethernet network is used to communicate between the head node and the compute nodes. The head node also provides services to the compute nodes, including NFS, monitoring, and job scheduling. Note that with Ethernet, it is possible to have a single network for all types of traffic (MPI, NFS, monitoring, etc.). Typically, the head node has more storage than the compute nodes. It is possible to use separate servers and networks for these tasks, but using a high performance 10 GigE interconnect allows for a simpler and more cost effective design.

In addition to offering a familiar technology, 10 GigE can offer wide functionality and increased performance while reducing cost. The transition to 10 GigE for HPC is considered by many to be similar to the natural progression from Fast Ethernet to 1 Gigabit Ethernet. Concerns over performance when compared to InfiniBand are minimal for many popular applications (e.g., Fluent and LS-Dyna). Using similar hardware, 10 GigE performance is within 10% of a 4x DDR InfiniBand solution for these applications.

Another popular feature of Ethernet that is retained in 10 GigE is the use of low cost twisted pair cabling and RJ-45 connectors. Standard Category 6 (Cat-6) cable allows a small bend radius, is lightweight, and uses simple snap-in connectors. This type of cabling is now available for 10 Gigabit Ethernet and is called 10GBASE-T. Using Cat-6 (or possibly Cat-5e) cables, 10GBASE-T provides a distance of up to 55 meters, which can be extended to 100 meters using Cat-6a cabling.
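When laying out a machine room, the distance limits above are easy to check with a small lookup. The Python sketch below uses only the two run lengths quoted in this article (55 meters for Cat-6 and 100 meters for Cat-6a); the names `MAX_RUN_M` and `cable_supports_run` are hypothetical, and Cat-5e is deliberately left out because its support is only described as "possible."

```python
# Maximum 10GBASE-T run lengths in meters, as quoted in the article.
MAX_RUN_M = {
    "cat6": 55,
    "cat6a": 100,
}


def cable_supports_run(category, run_m):
    """Return True if a cable of the given category can carry
    10GBASE-T over a run of run_m meters."""
    # Accept spellings such as "Cat-6a", "cat 6a", or "CAT6A".
    key = category.lower().replace("-", "").replace(" ", "")
    if key not in MAX_RUN_M:
        raise ValueError(f"no 10GBASE-T distance listed for {category!r}")
    return 0 < run_m <= MAX_RUN_M[key]


# A 70 meter run is too long for Cat-6 but fine for Cat-6a.
print(cable_supports_run("Cat-6", 70))
print(cable_supports_run("Cat-6a", 70))
```

Treat the result as a first sanity check only; real installations should follow the cable and switch vendor's specifications.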

In addition to traditional copper cabling, the popular SFP+ (Small Form-factor Pluggable) interface allows both optical and copper cables to be used for the 10 GigE network. Both optical and copper cabling options (including suggested model numbers) are covered in the white paper: High Performance Computing Made Simple: The 10 Gigabit Ethernet Cluster.

Conclusion

The use of 10 GigE may not fit everyone's needs. For those who want a simple cluster with minimal configuration, however, 10 GigE may be worth a look. In general, 10 GigE is best suited for smaller node counts (50 or fewer), as high port count switches are either not yet available or excessively expensive.

The ease of implementing a 10 GigE cluster is perhaps what is most attractive. A single interconnect can be used for all nodes and, if desired, standard RJ-45 and Cat-6 cabling can be employed. There is of course some tuning that may be required beyond the plug and play aspects of Ethernet, but baseline functionality is easy to achieve.

For the high end user, other high performance interconnect technologies are still available, but for users who require simplicity and low cost, 10 GigE may be the best choice. You can learn more about 10 GigE by downloading the white paper.